/image.png (optional)

---

```

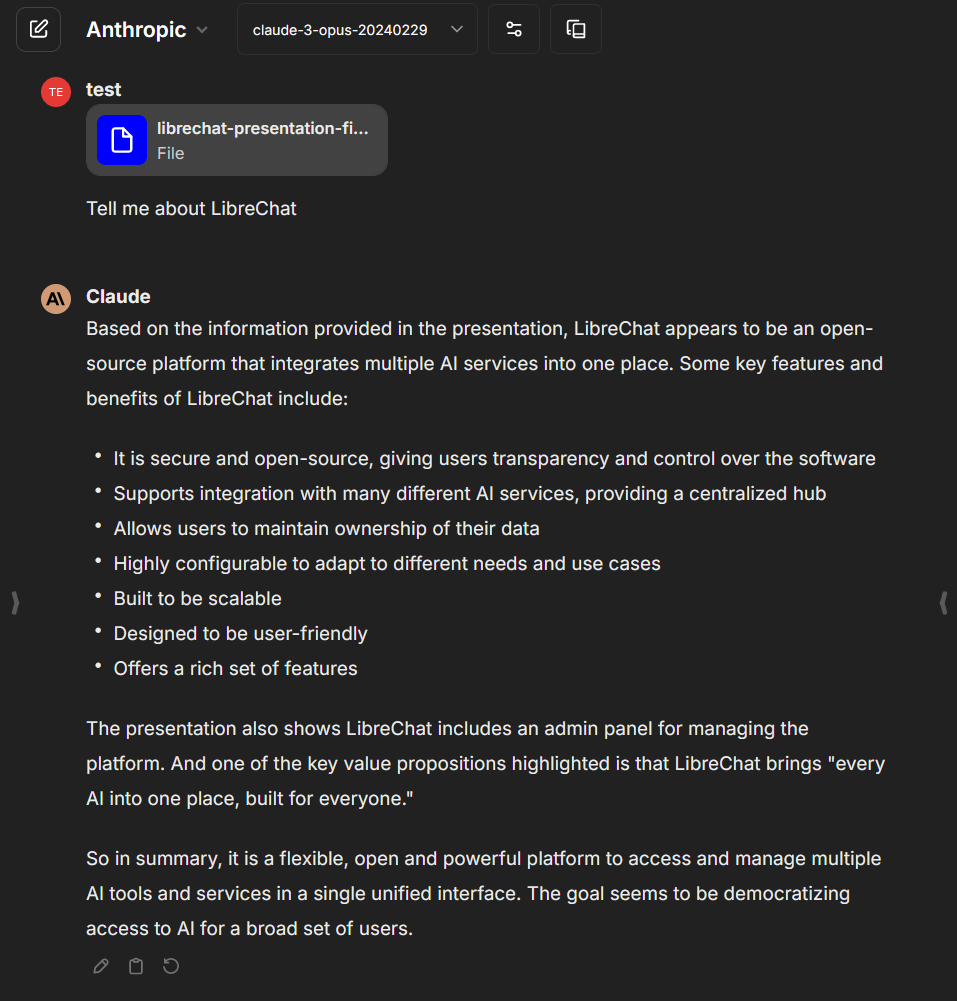

## Assets

Whenever possible, upload assets (e.g., images) to GitHub instead of storing them in the `/public` folder. This helps keep your repository organized and makes it easier to manage your assets.

### Images

**Note: In the following example:**

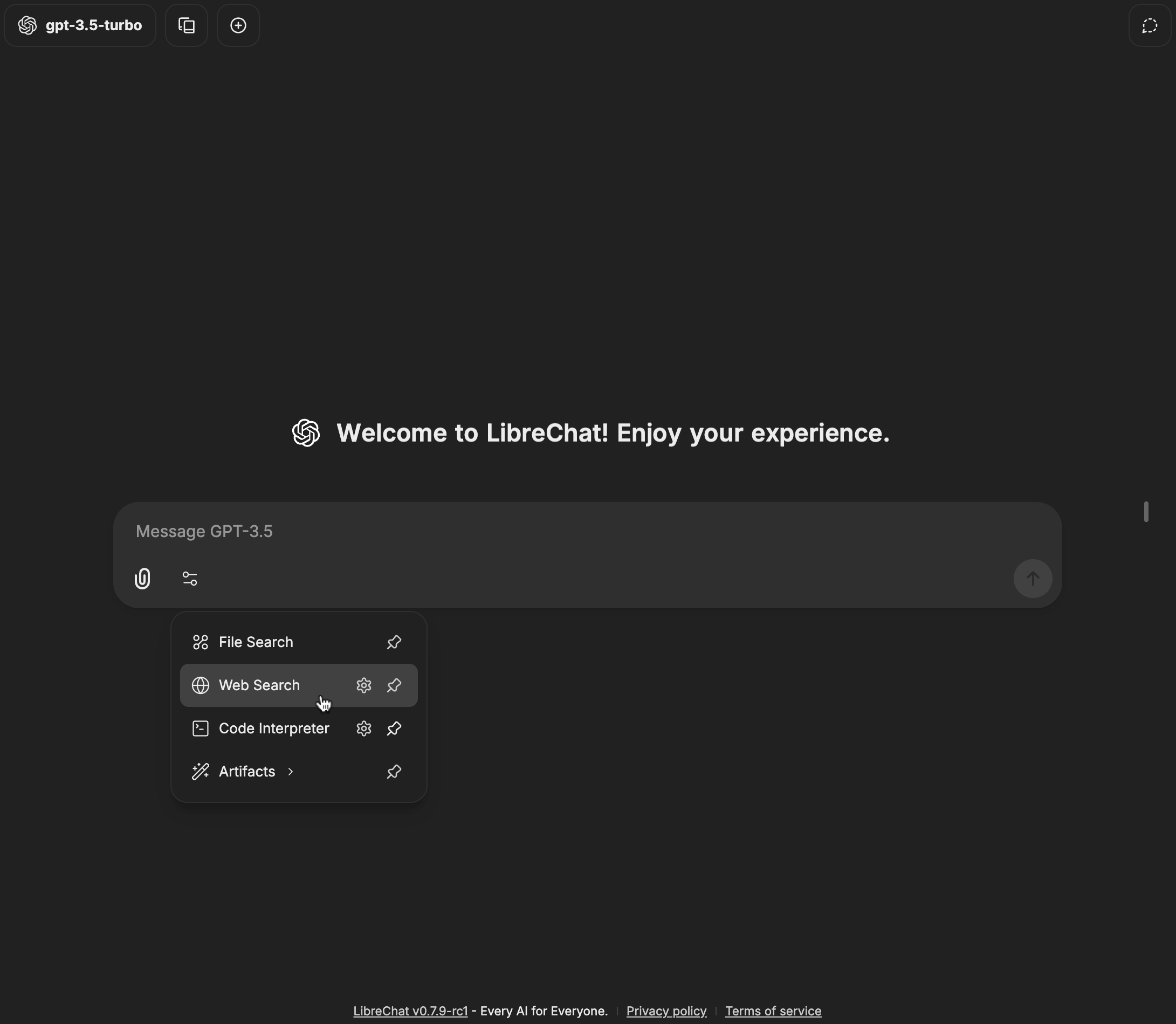

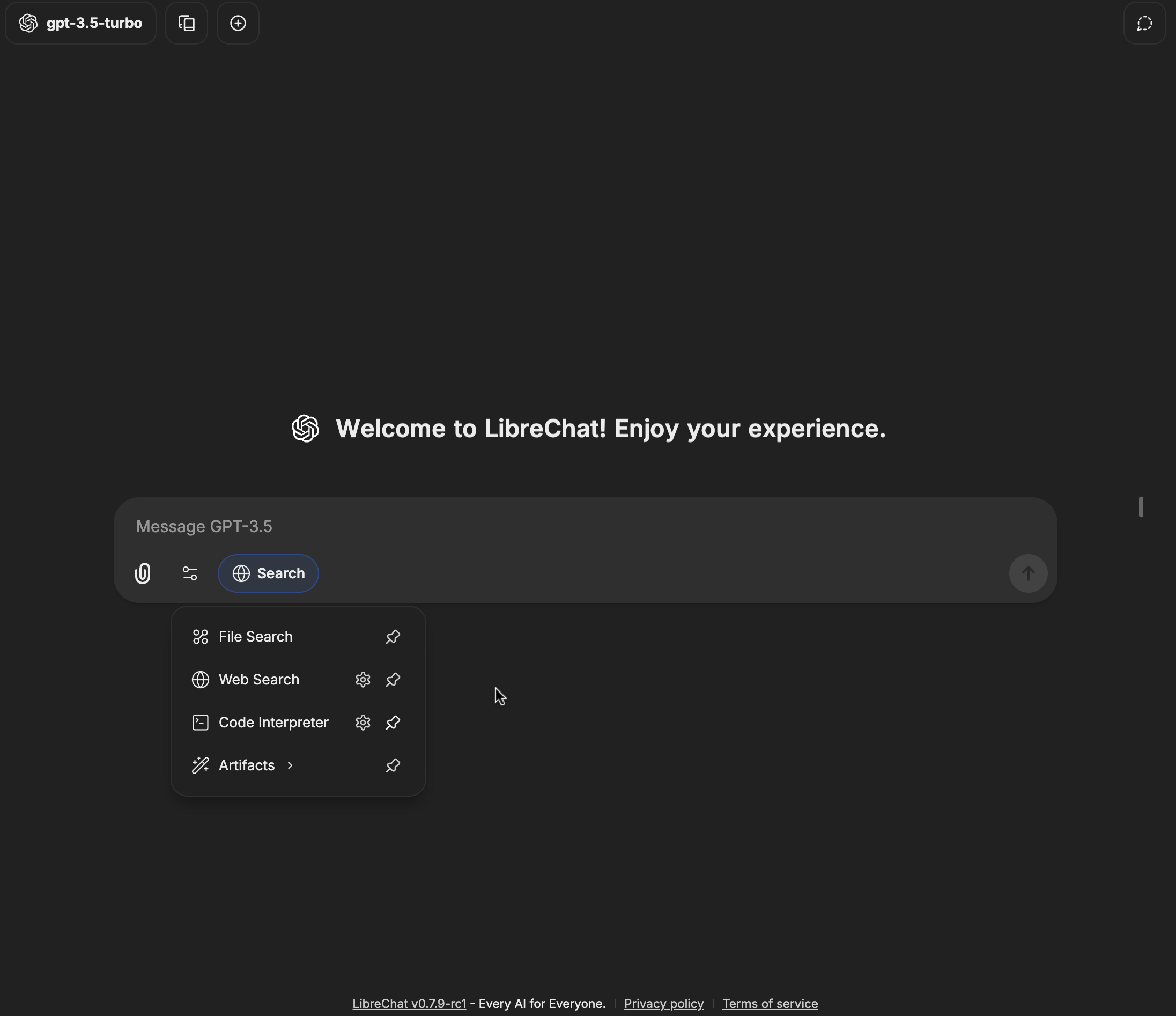

- I provided screenshots for both light and dark mode.

- I used `Image from 'next/image'` which gave me 4x improvement on the image file size for better performance.

see the following example in action here: [User Guides](/docs/user_guides)

```mdx filename="Example"

import Image from 'next/image'

```

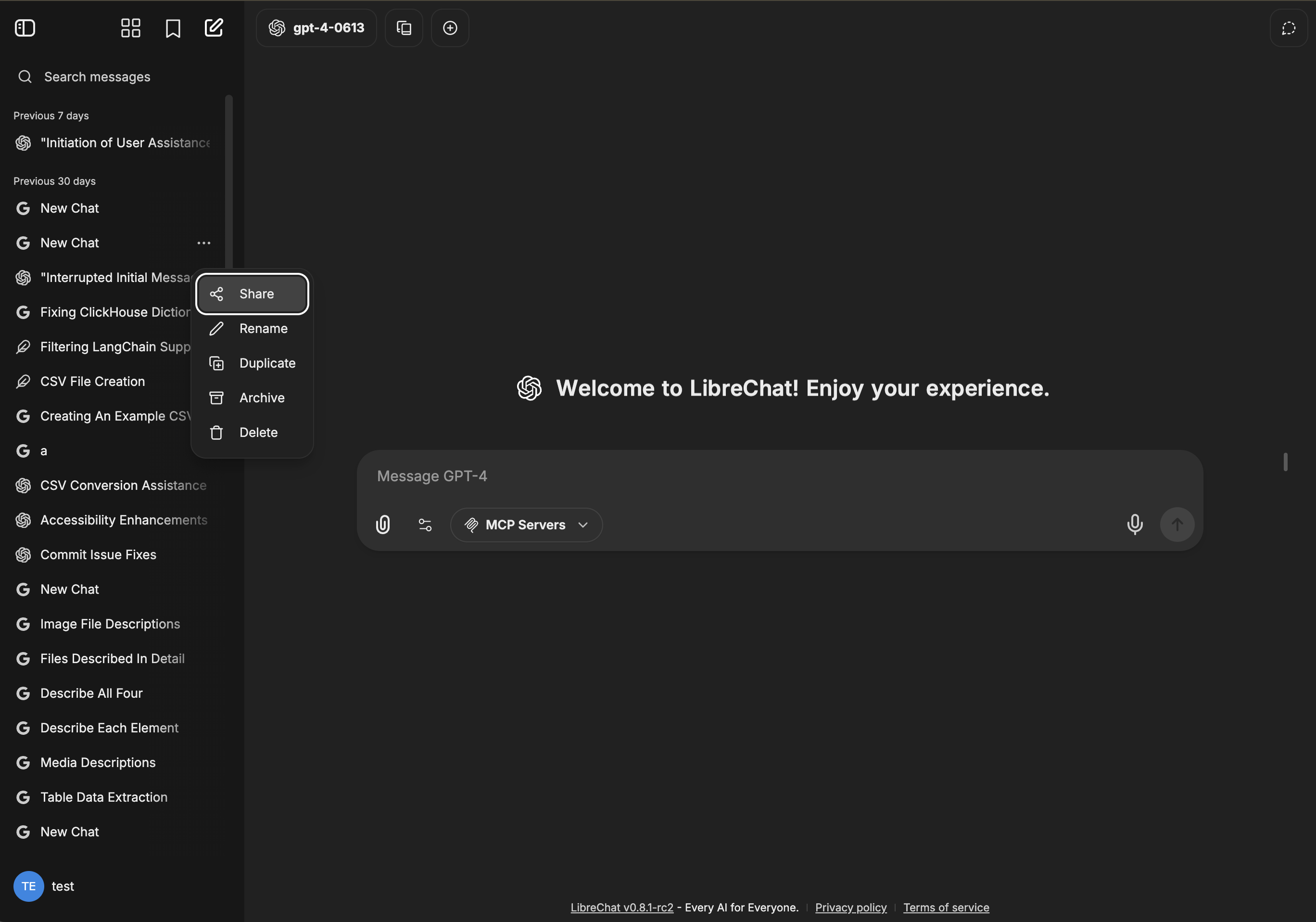

### How to Upload Images and Videos on GitHub

- Go to the LibreChat repository

- Find a random conversation or PR

- Paste an image from your clipboard into the text input box. It will automatically be converted into a URL.

- Copy and paste the resulting URL in your document. (Then exit the page without actually posting the comment.😉)

- Upload directly from the web UI:

## Test the Docs

### Review carefully before submitting your PR

Before submitting a PR for your blog post, **always** test to ensure everything looks and functions as intended.

#### Check the following:

- Your new document(s) for layout, accuracy and completeness

- The document's position in the Table of Contents (ToC)

- The image and link in your document

#### To test:

1. Prepare the environment by running `pnpm install`

2. Start the dev server with `pnpm dev`

3. Test the build by running `pnpm build` followed by `pnpm start`

# Syntax Highlighting (/docs/documentation/syntax_highlighting)

{/* TODO: This page contained Nextra-specific interactive examples with React hooks

(useRef, useEffect, useState) that are incompatible with Fumadocs Server Components.

The page needs to be rewritten with Fumadocs syntax highlighting examples. */}

This documentation uses [Shiki](https://shiki.matsu.io) for syntax highlighting at build time.

## Basic Usage

Add code blocks using triple backticks with a language identifier:

````md

```js

console.log('hello, world')

```

````

Renders as:

```js

console.log('hello, world')

```

## Supported Languages

All languages supported by Shiki are available. Common ones include:

- `js` / `javascript`

- `ts` / `typescript`

- `jsx` / `tsx`

- `bash` / `sh`

- `json`

- `yaml`

- `python`

- `go`

- `rust`

- `css`

- `html`

- `markdown` / `md`

- `sql`

- `dockerfile`

## Filenames

You can add a filename to code blocks:

````md

```js filename="example.js"

console.log('hello')

```

````

## Line Highlighting

Highlight specific lines:

````md

```js {1,3-4}

import React from 'react'

function Hello() {

return Hello

}

```

````

# Agents (/docs/features/agents)

# Agents: Build Custom AI Assistants

LibreChat's AI Agents feature provides a flexible framework for creating custom AI assistants powered by various model providers.

This feature is similar to OpenAI's Assistants API and ChatGPT's GPTs, but with broader model support and a no-code implementation, letting you build sophisticated assistants with specialized capabilities.

## Getting Started

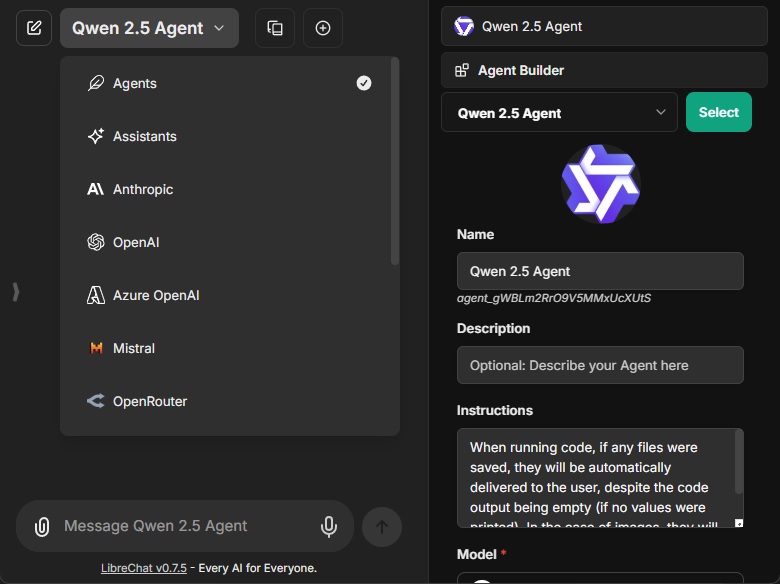

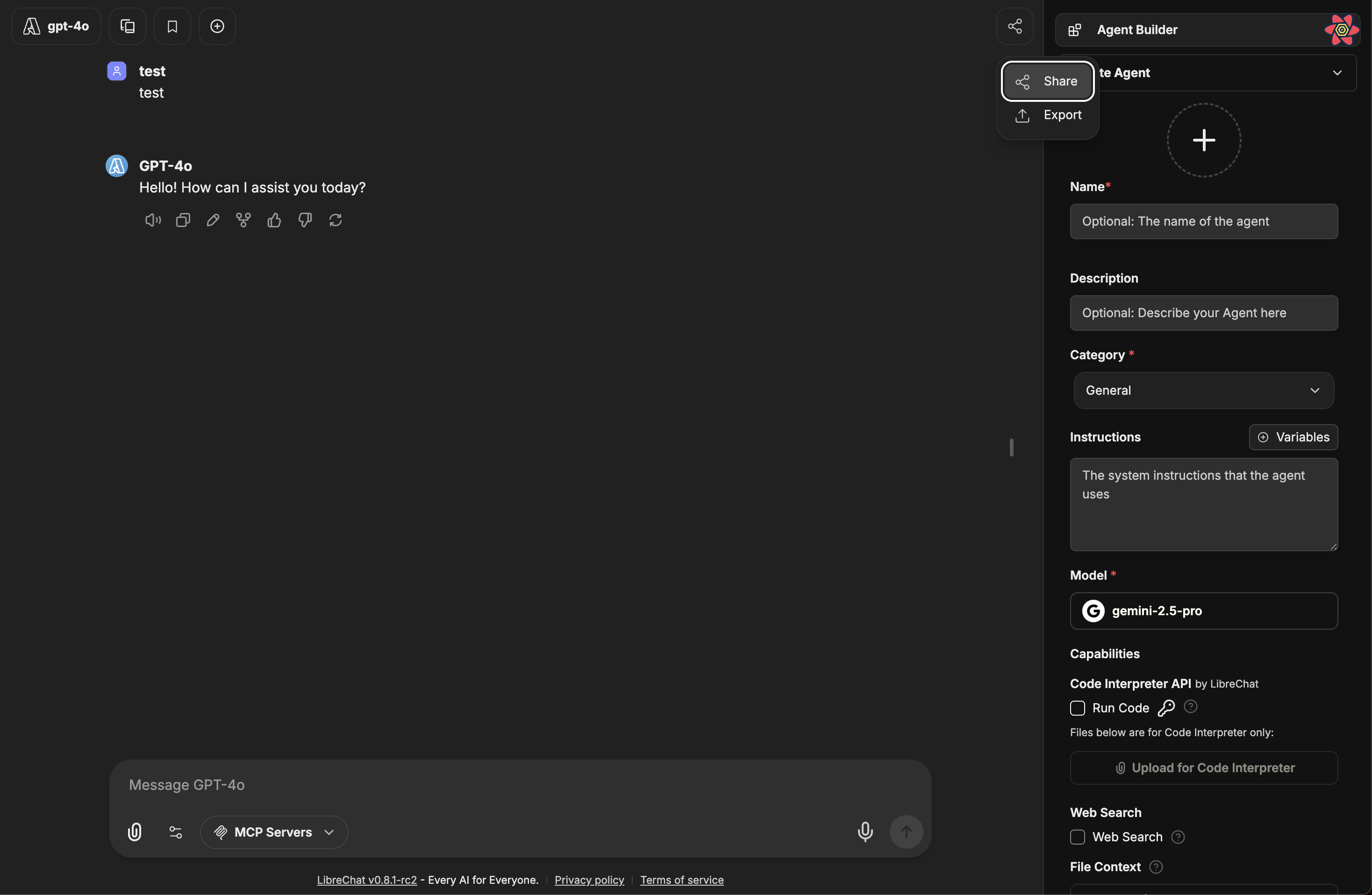

To create a new agent, select "Agents" from the endpoint menu and open the Agent Builder panel found in the Side Panel.

The creation form includes:

- **Avatar**: Upload a custom avatar to personalize your agent

- **Name**: Choose a distinctive name for your agent

- **Description**: Optional details about your agent's purpose

- **Instructions**: System instructions that define your agent's behavior

- **Model**: Select from available providers and models

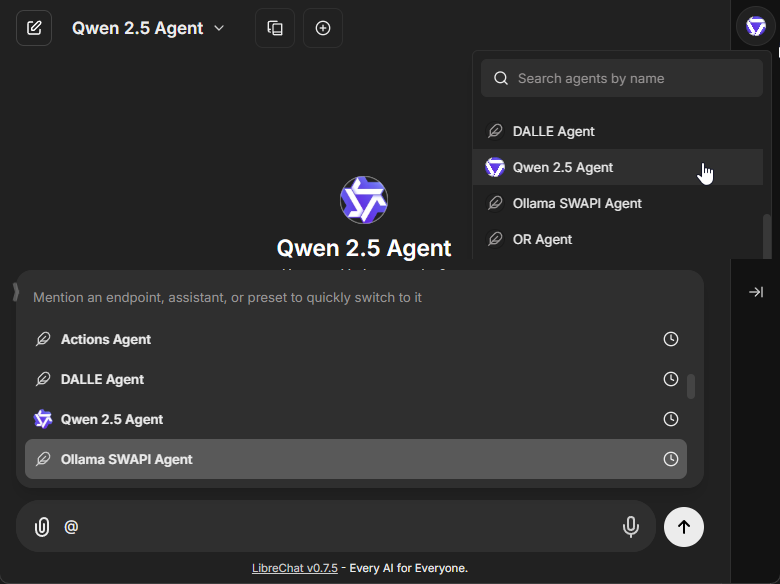

**Existing agents can be selected from the top dropdown of the Side Panel.**

- **Also by mention with "@" in the chat input.**

### Model Configuration

The model parameters interface allows fine-tuning of your agent's responses:

- Temperature (0-1 scale for response creativity)

- Max context tokens

- Max output tokens

- Additional provider-specific settings

## Agent Capabilities

> **Note:** All capabilities can be toggled via the `librechat.yaml` configuration file. See [docs/configuration/librechat_yaml/object_structure/agents#capabilities](/docs/configuration/librechat_yaml/object_structure/agents#capabilities) for more information.

### Code Interpreter

When enabled, the Code Interpreter capability allows your agent to:

- Execute code in multiple languages, including:

- Python, JavaScript, TypeScript, Go, C, C++, Java, PHP, Rust, and Fortran

- Process files securely through the LibreChat Code Interpreter API

- Run code without local setup, configuration, or sandbox deployment

- Handle file uploads and downloads seamlessly

- [More info about the Code Interpreter API](/docs/features/code_interpreter)

- **Requires an API Subscription from [code.librechat.ai](https://code.librechat.ai/pricing)**

### File Search

The File Search capability enables:

- RAG (Retrieval-Augmented Generation) functionality

- Semantic search across uploaded documents

- Context-aware responses based on file contents

- File attachment support at both agent and chat thread levels

### File Context

The File Context capability allows your agent to store extracted text from files as part of its system instructions:

- Extract text while maintaining document structure and formatting

- Process complex layouts including multi-column text and mixed content

- Handle tables, equations, and other specialized content

- Work with multilingual content

- Text is stored in the agent's instructions in the database

- **No OCR service required** - Uses text parsing by default with fallback methods

- **Enhanced by OCR** - If OCR is configured, extraction quality improves for images and scanned documents

- Uses the same processing logic as "Upload as Text": **OCR > STT > text parsing**

- [More info about OCR configuration](/docs/features/ocr)

**Note:** File Context includes extracted text in the agent's system instructions. For temporary document questions in individual conversations, use [Upload as Text](/docs/features/upload_as_text) from the chat instead.

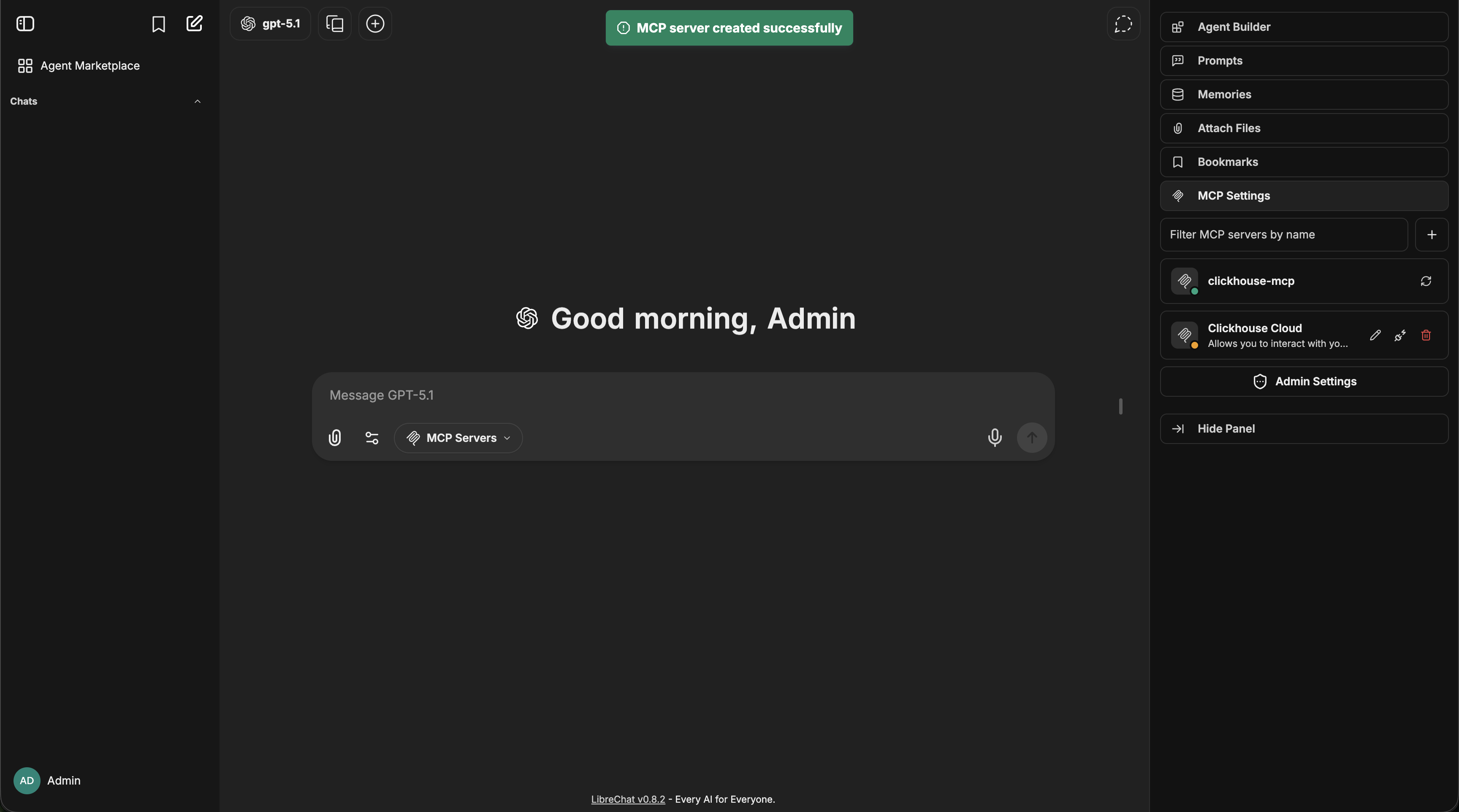

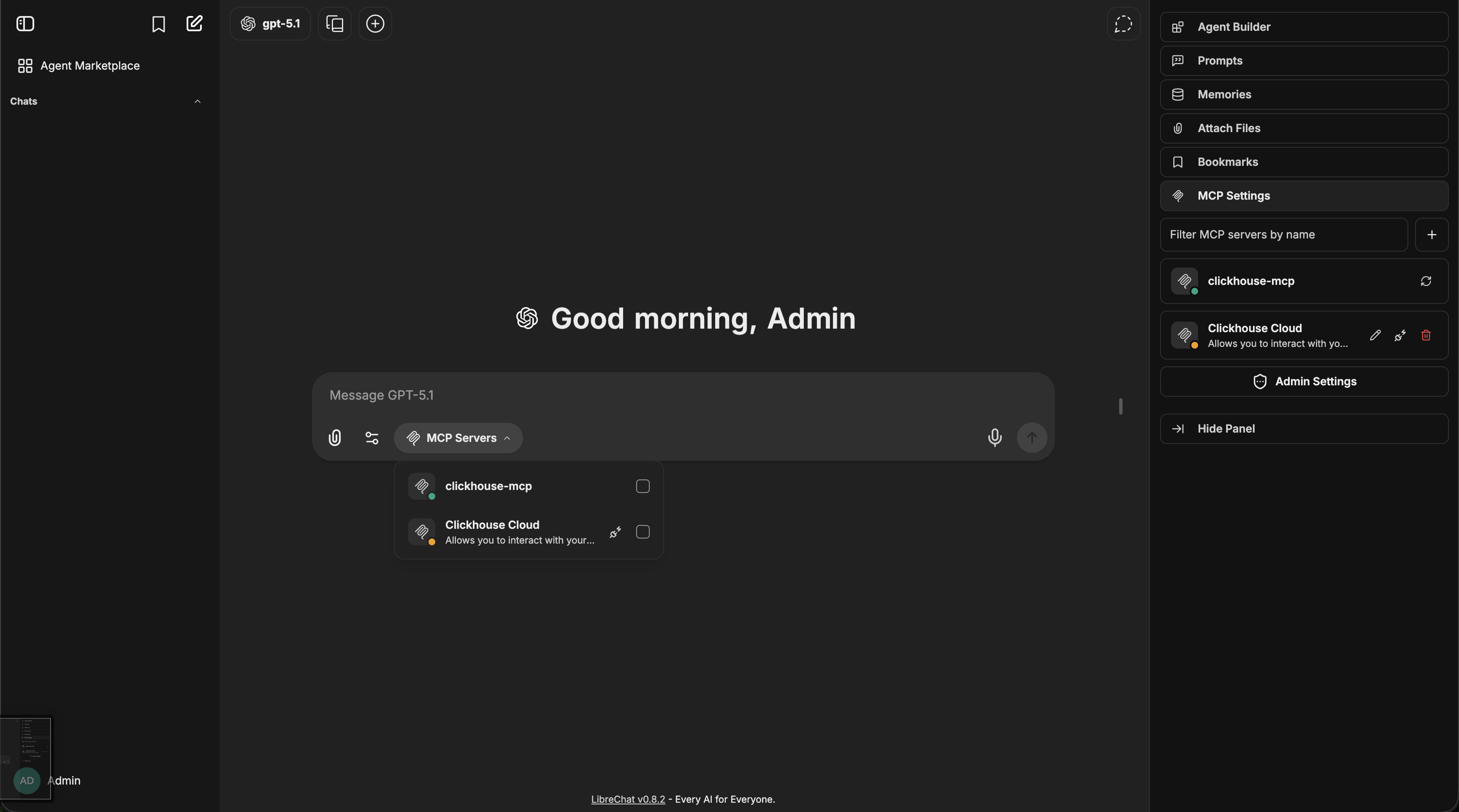

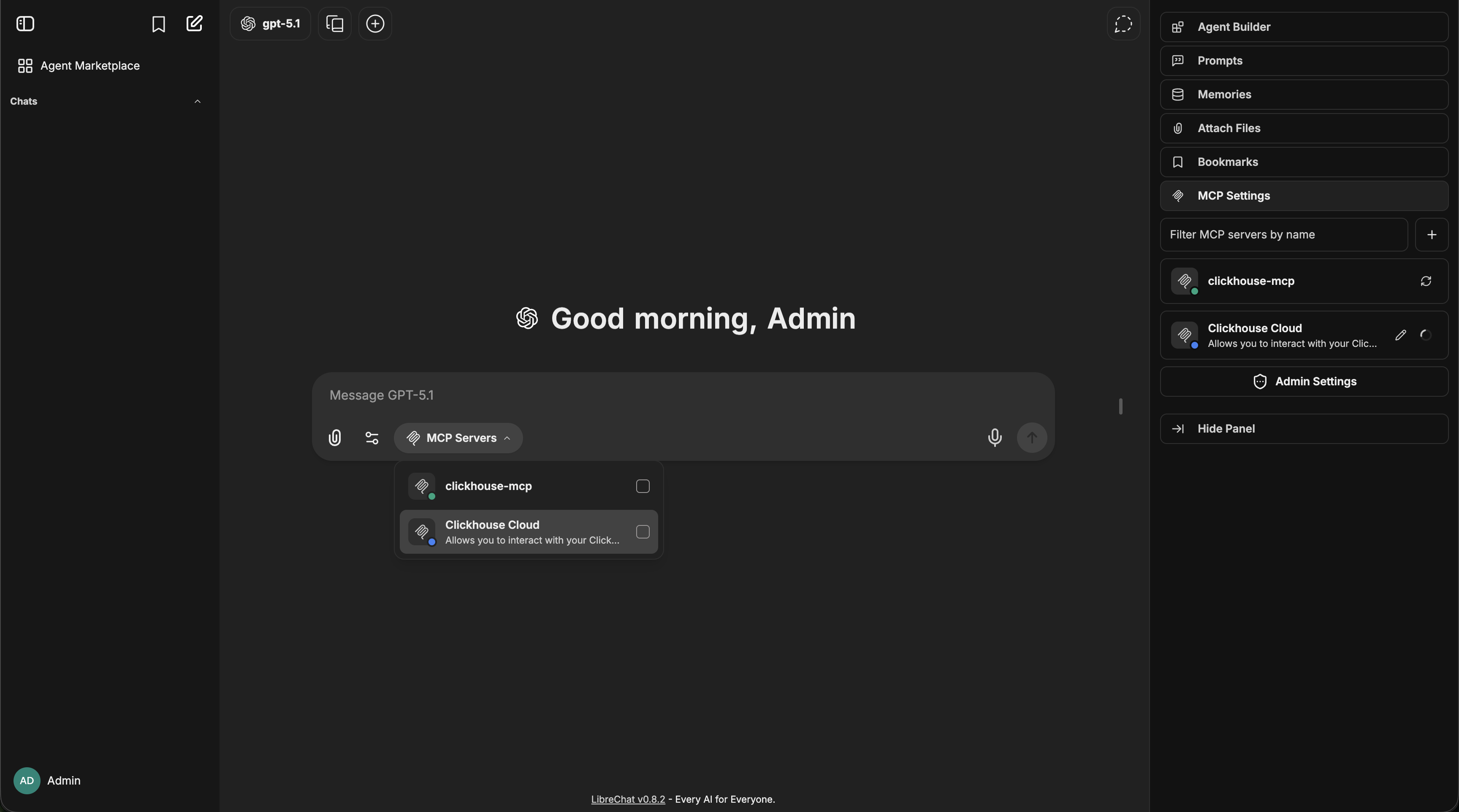

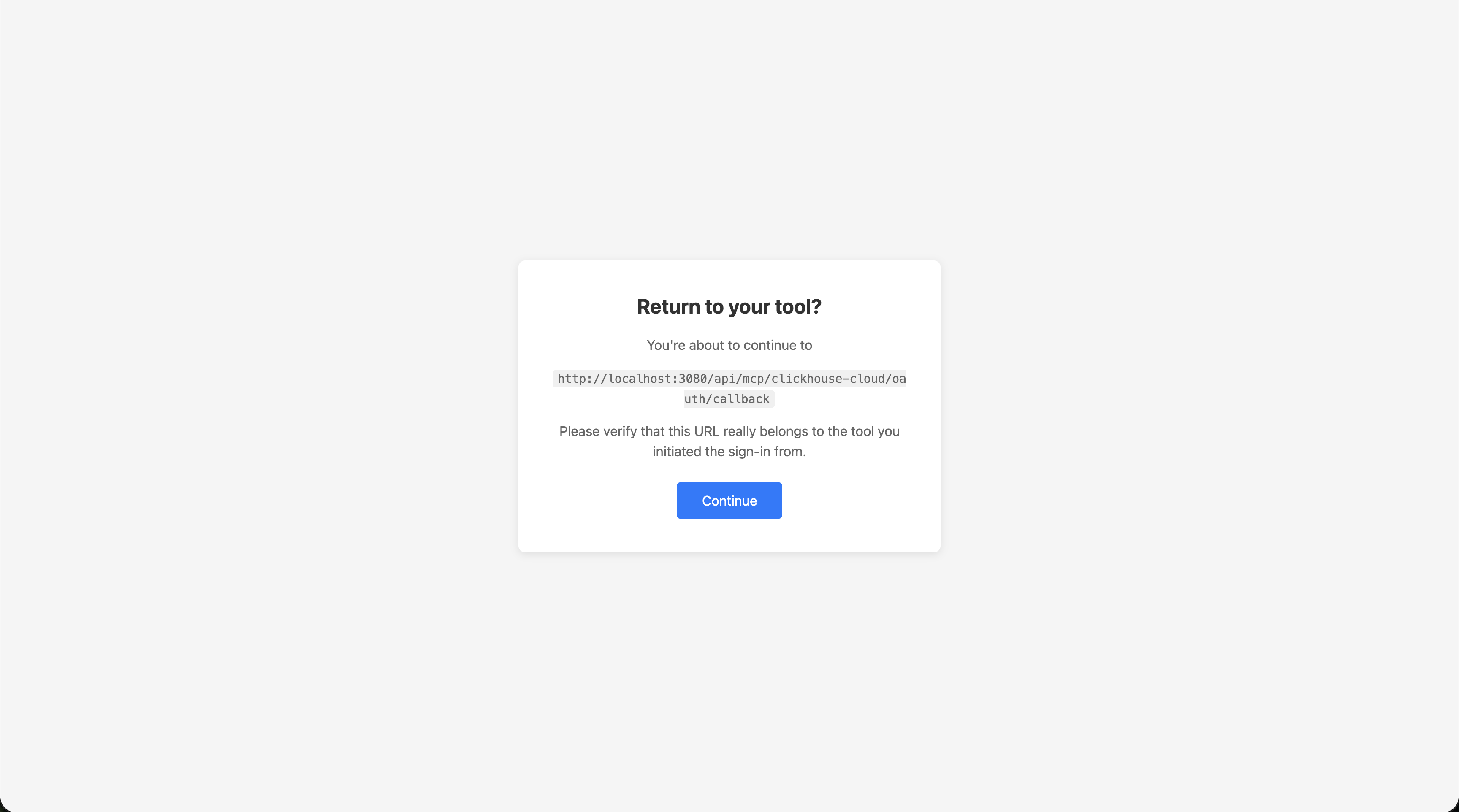

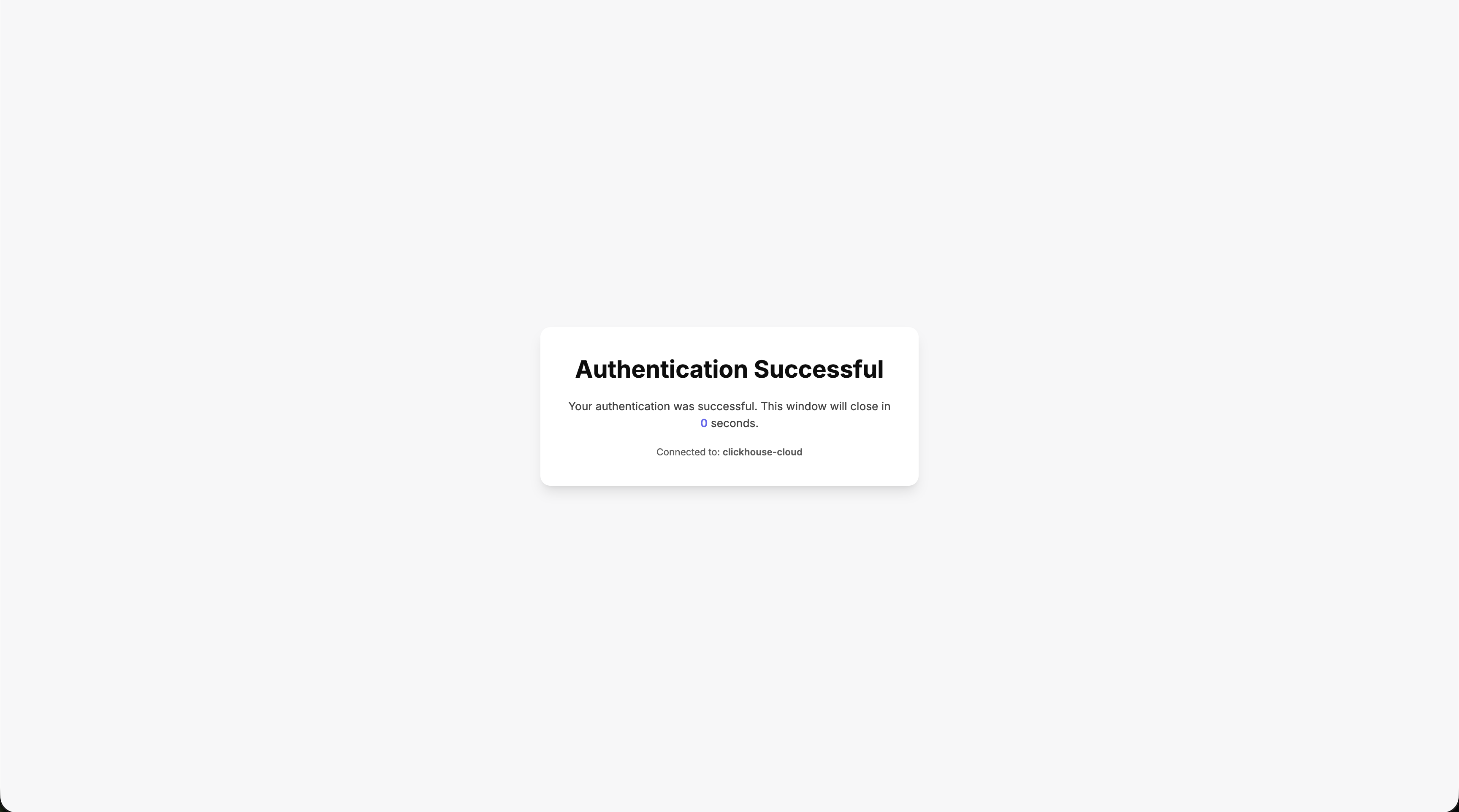

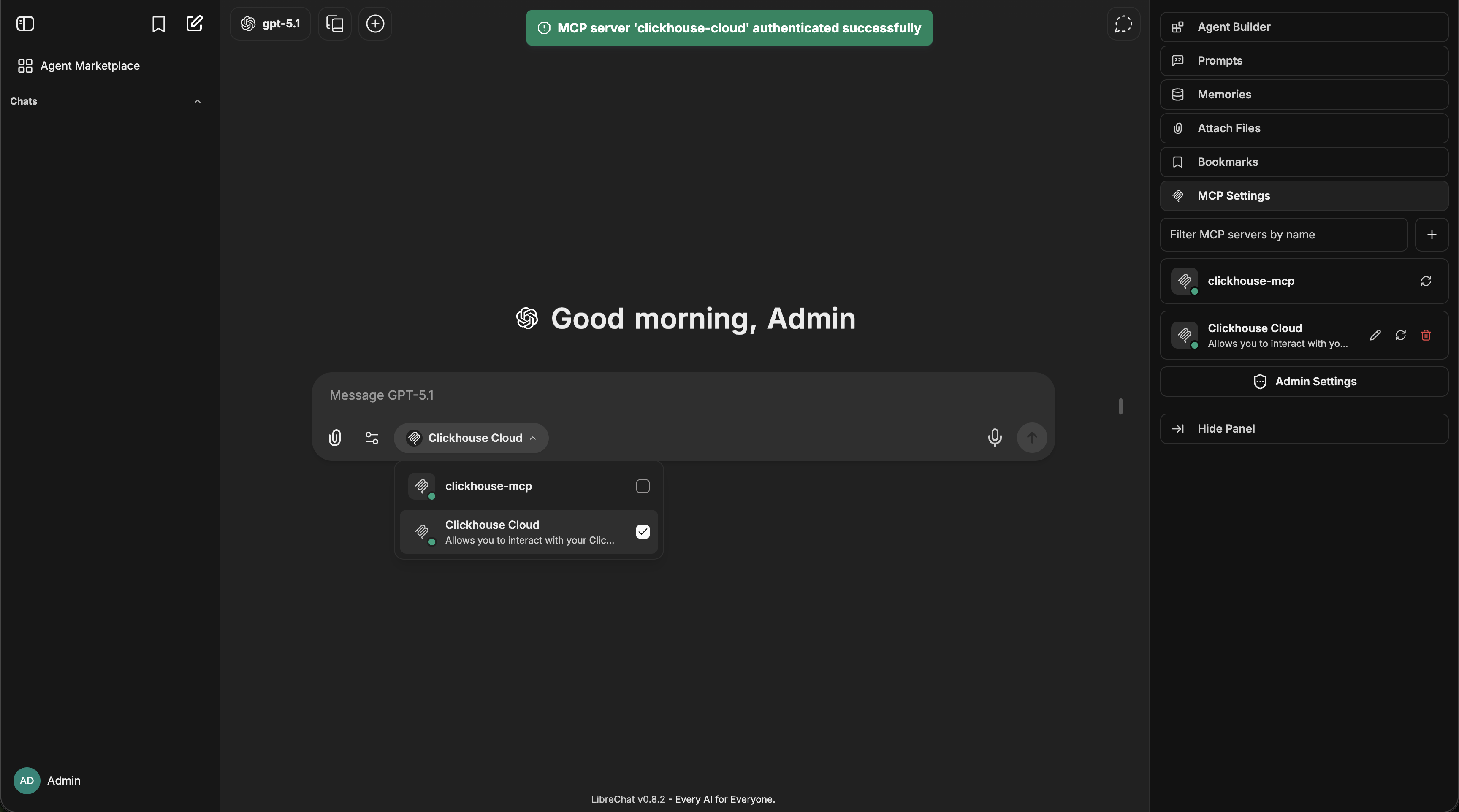

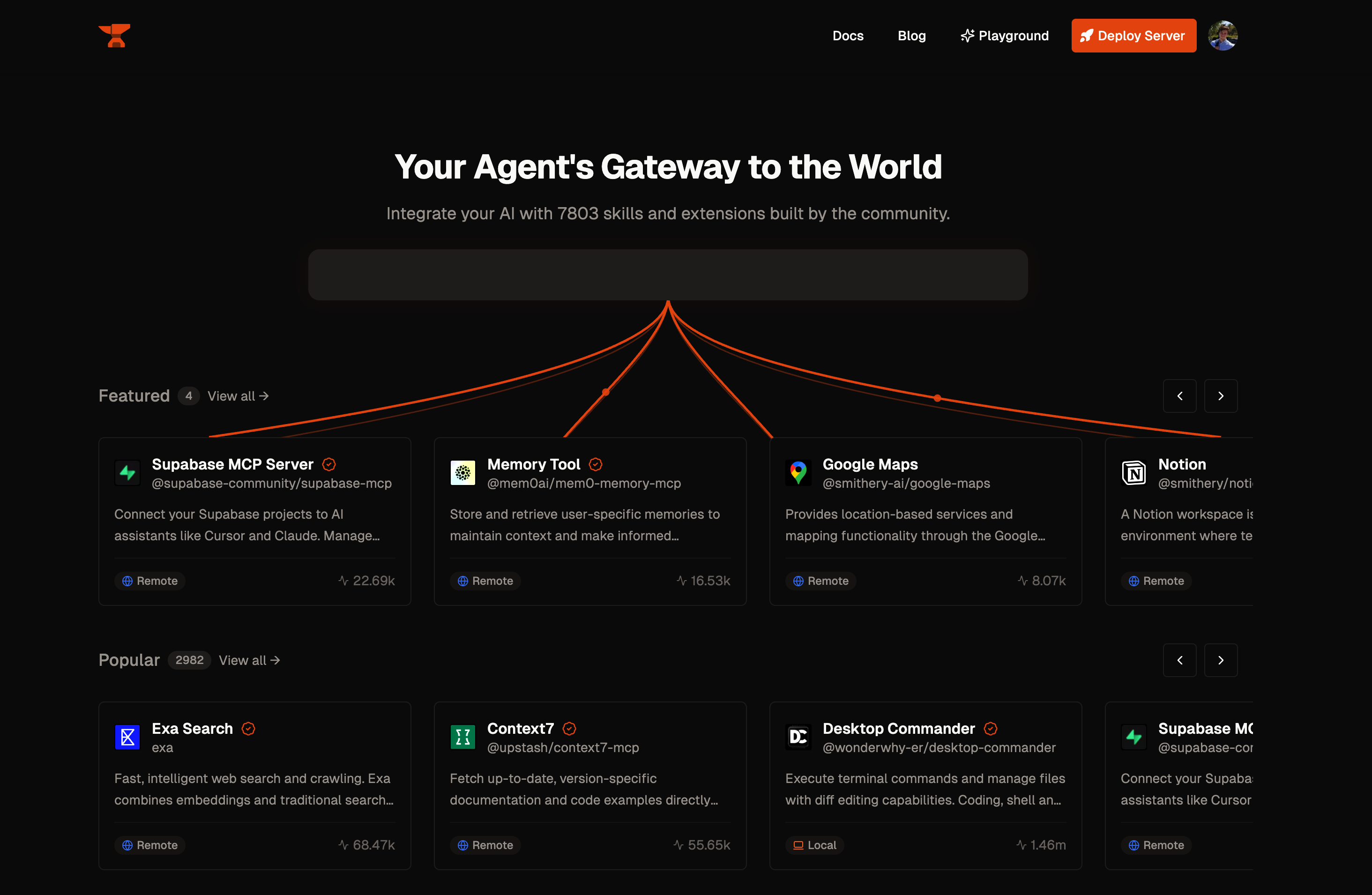

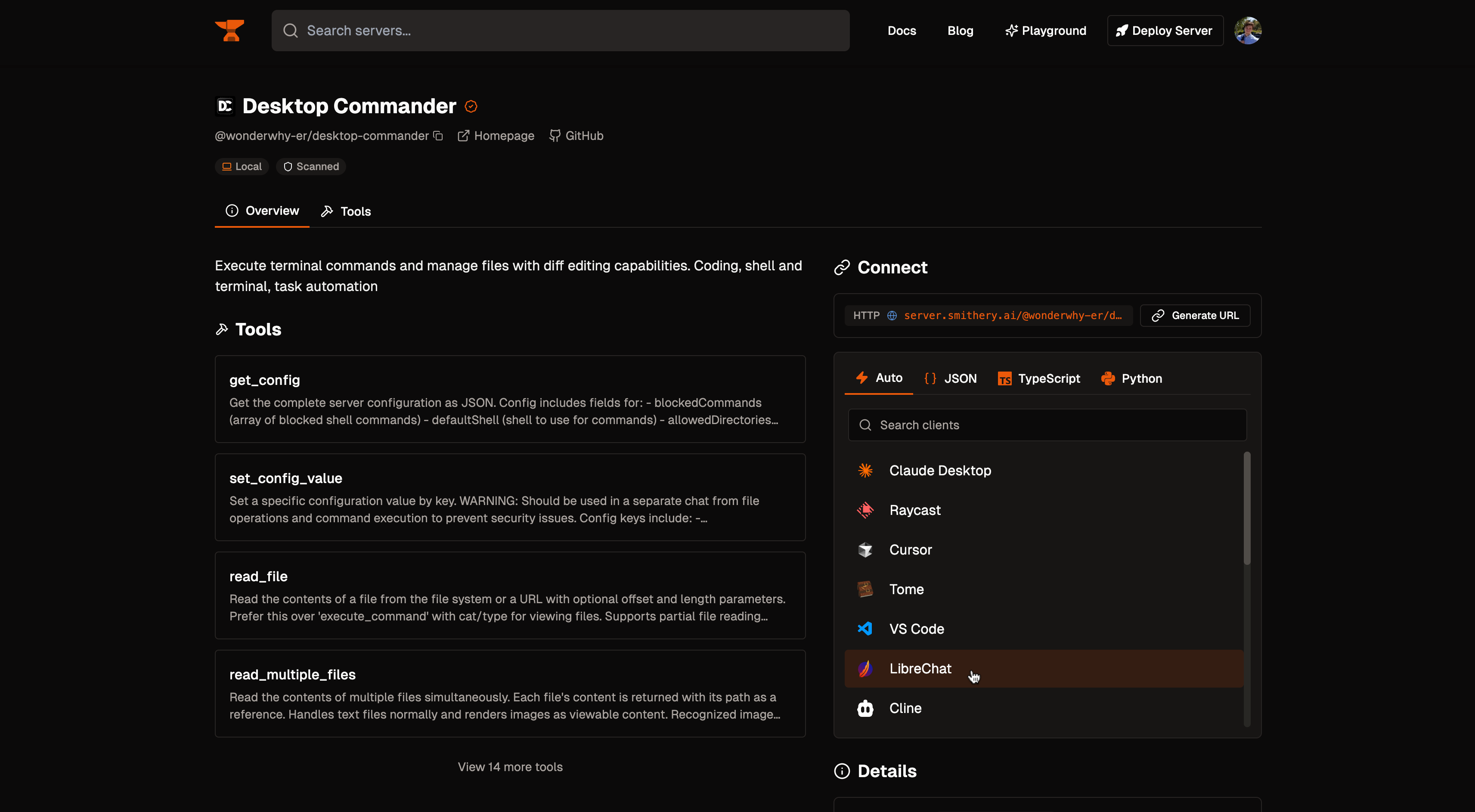

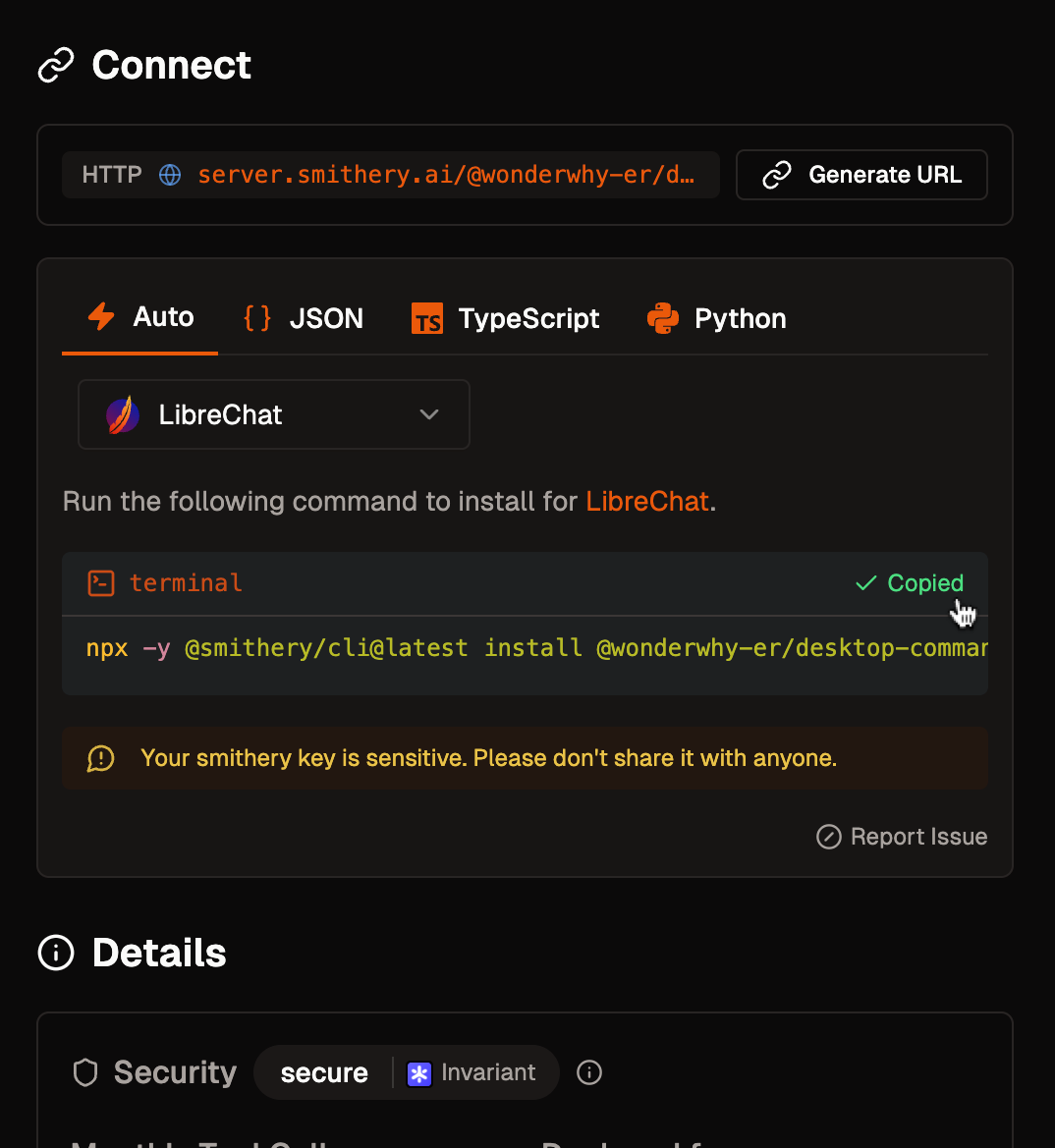

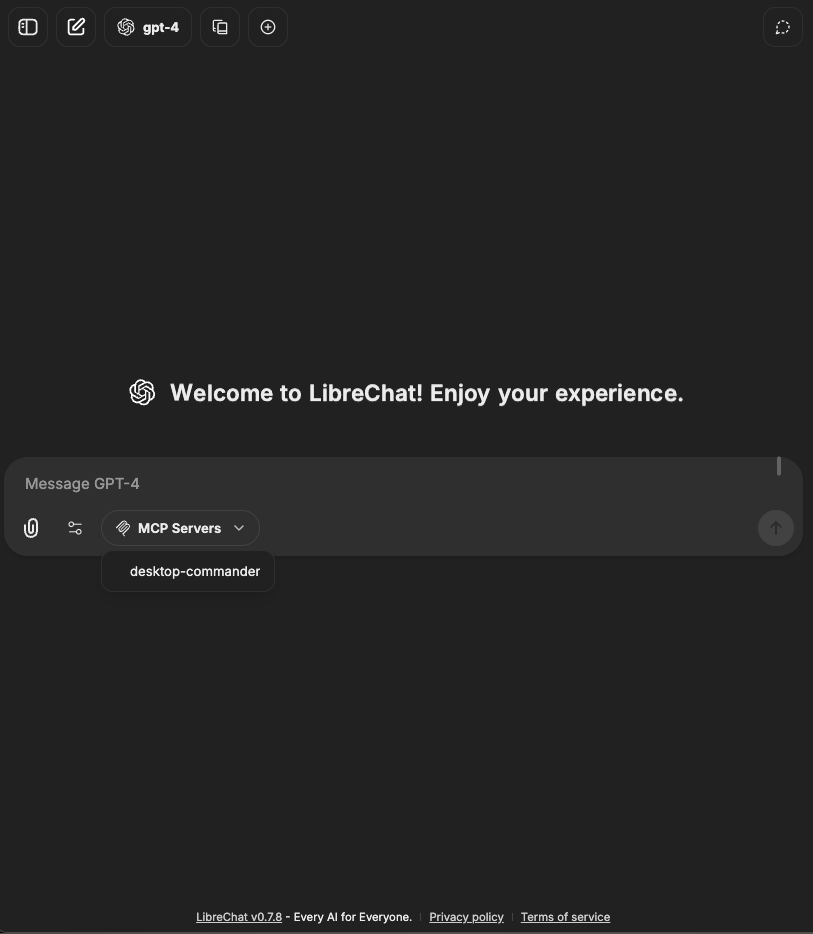

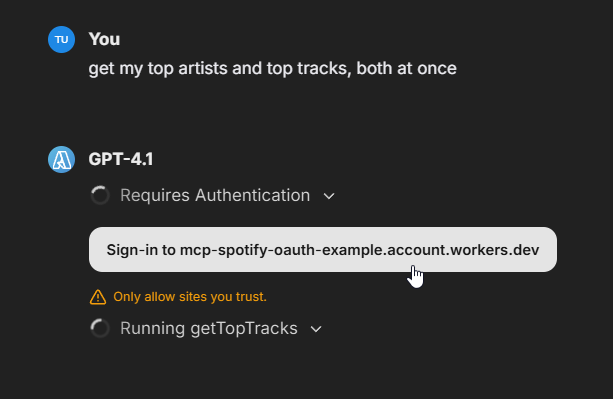

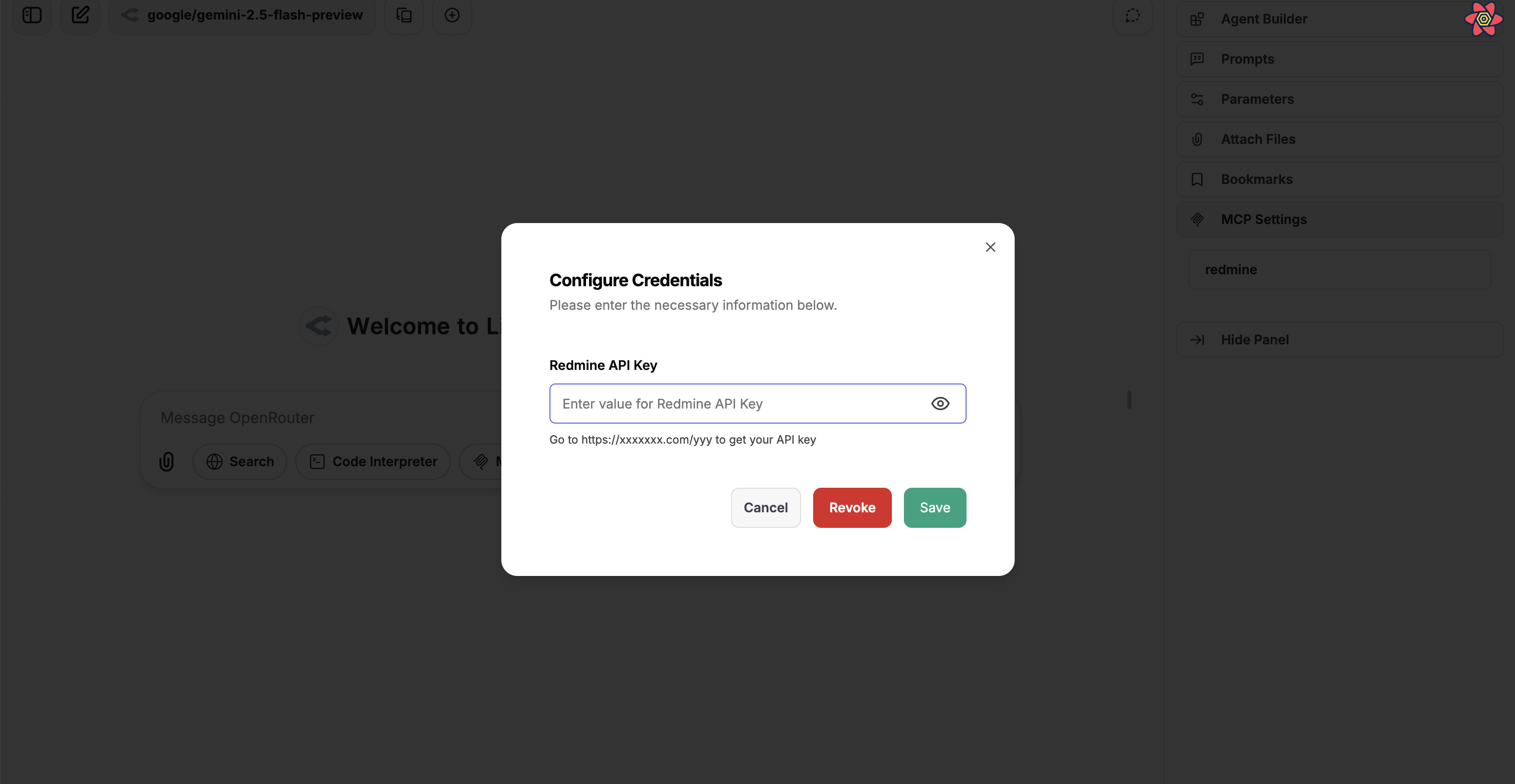

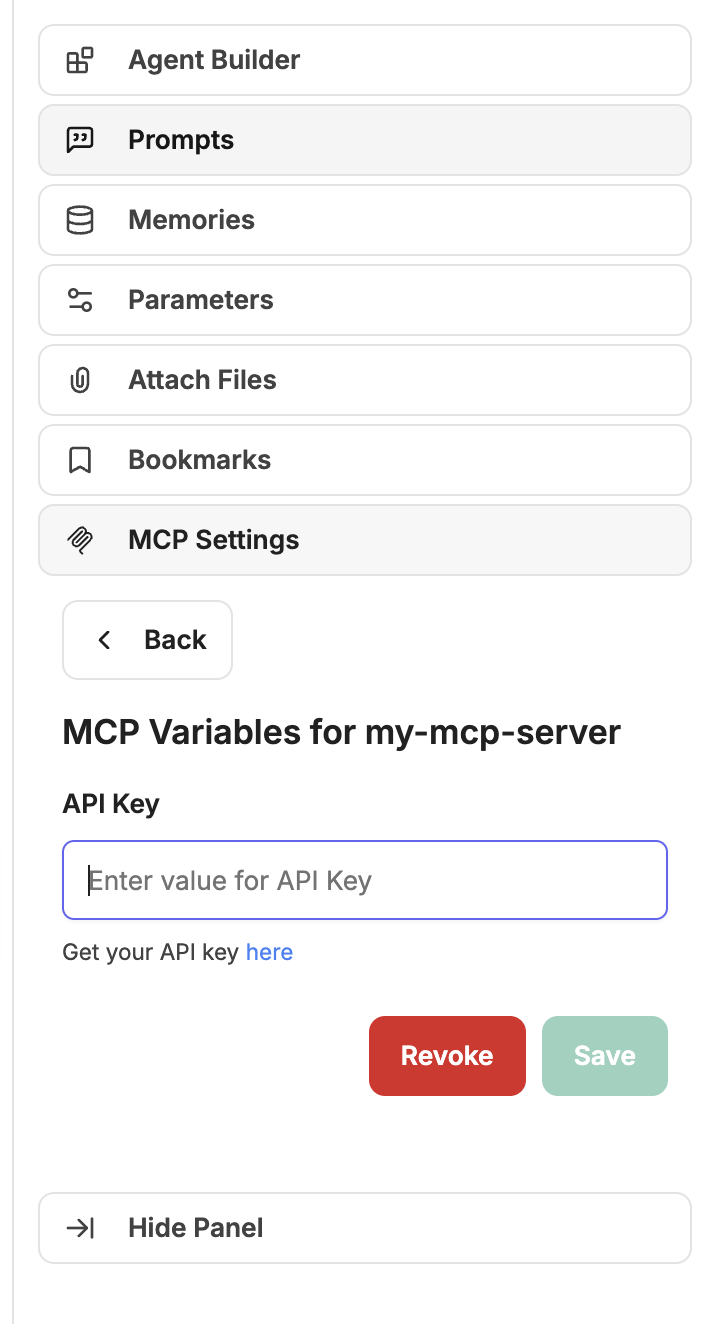

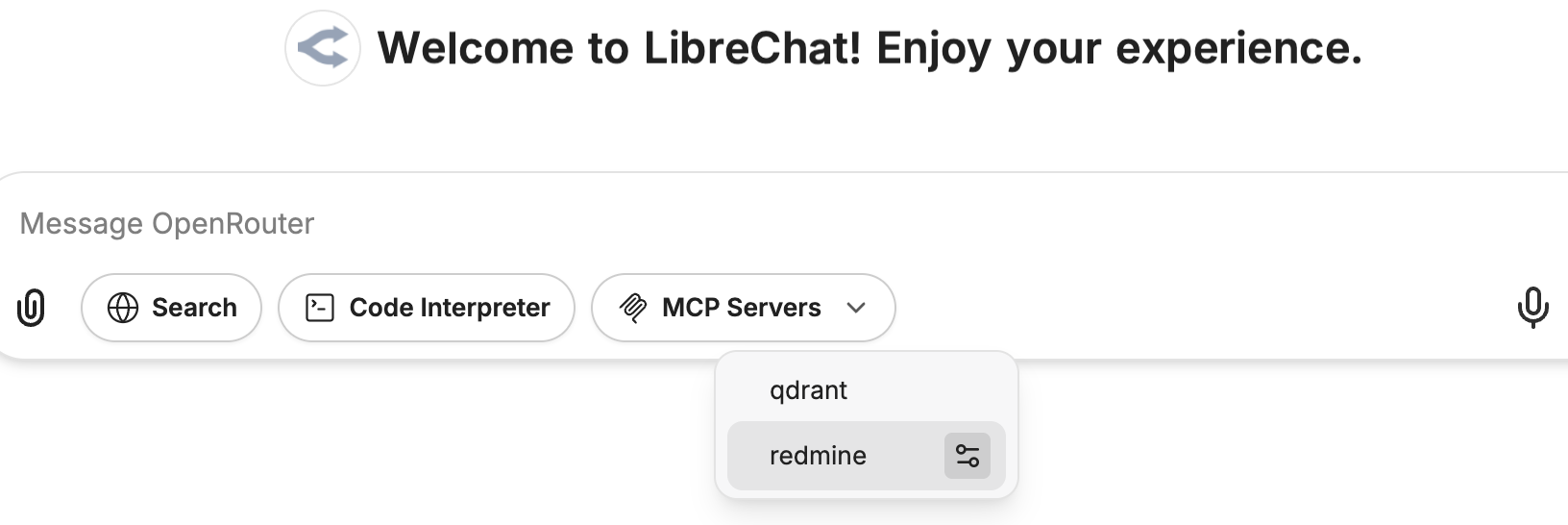

### Model Context Protocol (MCP)

MCP is an open protocol that standardizes how applications provide context to Large Language Models (LLMs), acting like a universal adapter for AI tools and data sources.

For more information, see documentation on [MCP](/docs/features/mcp).

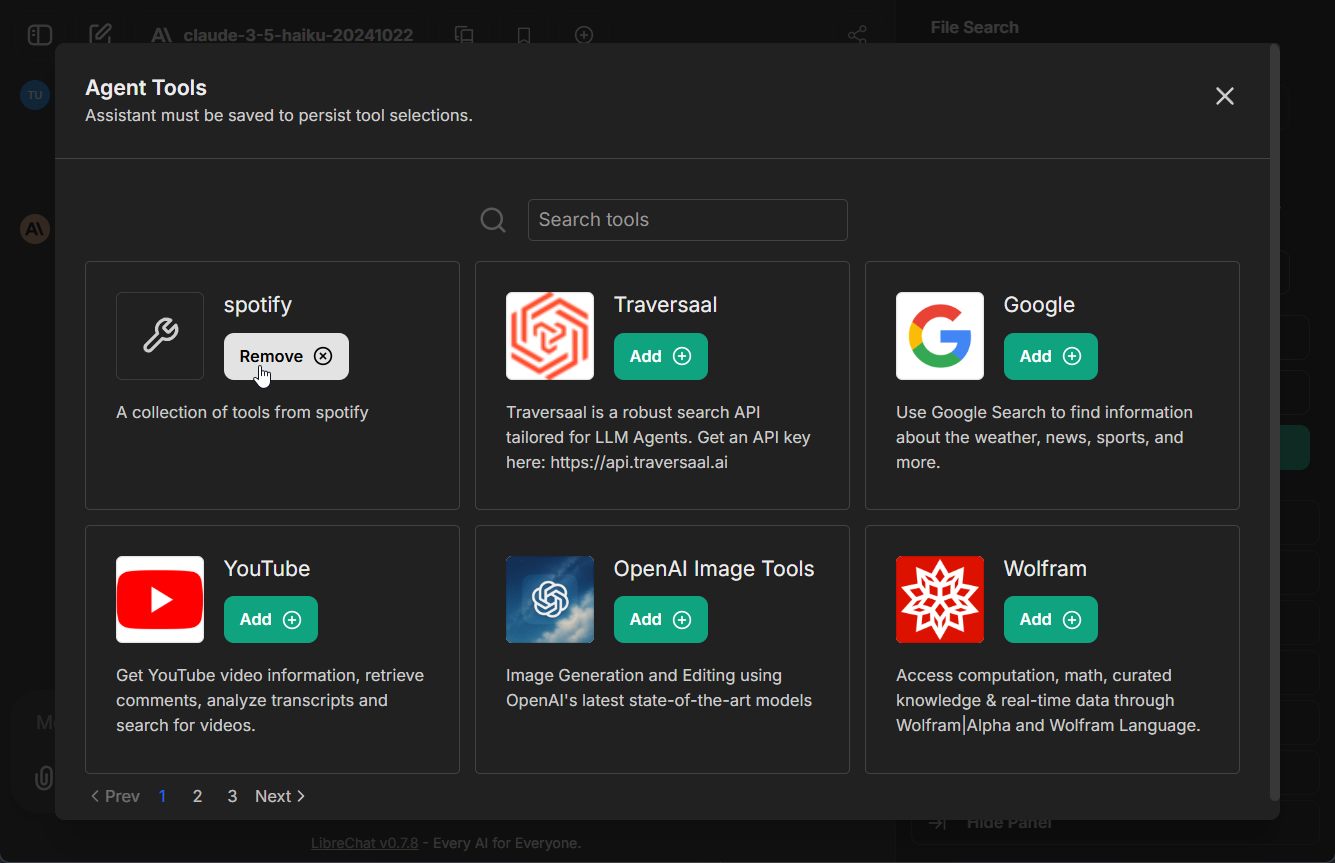

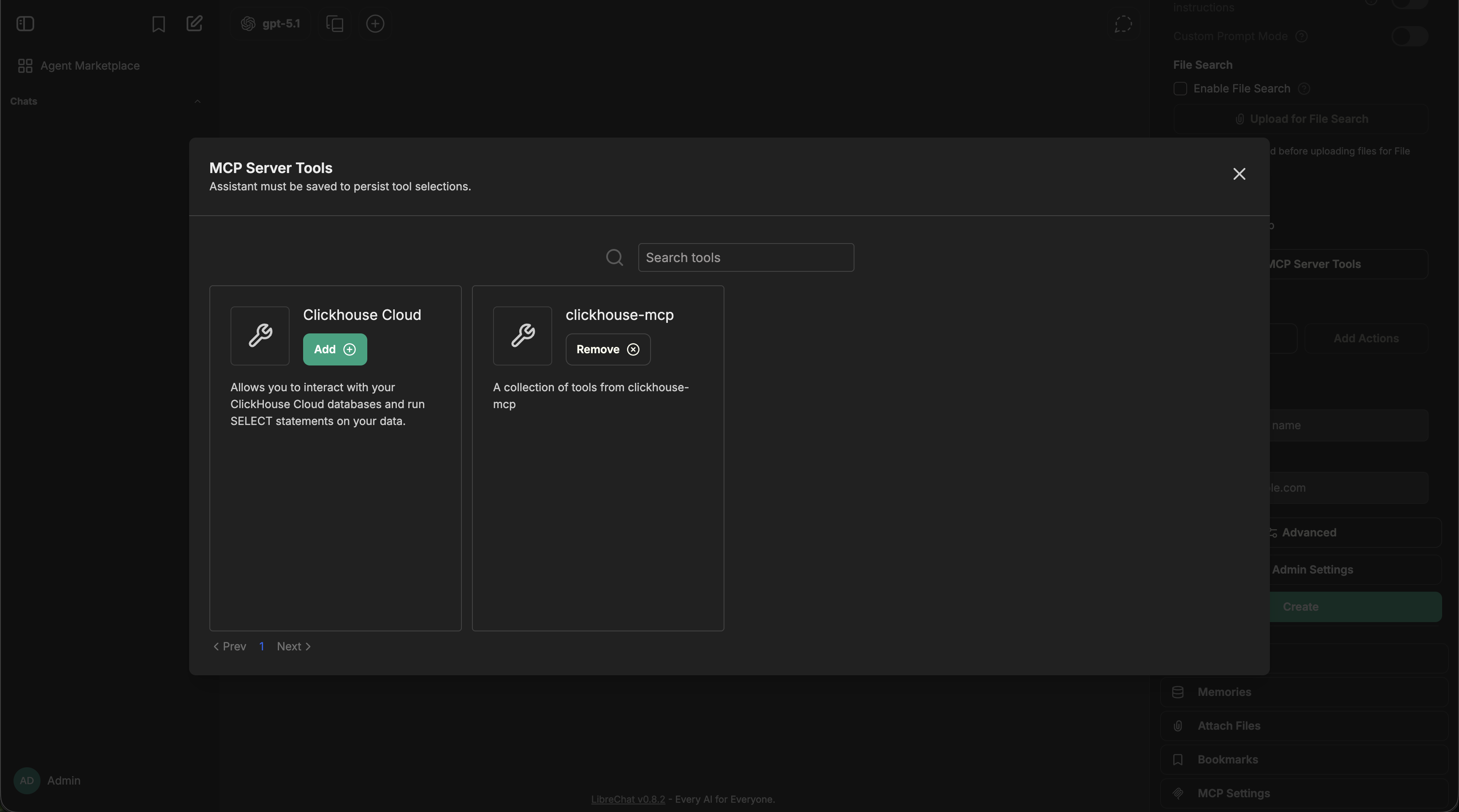

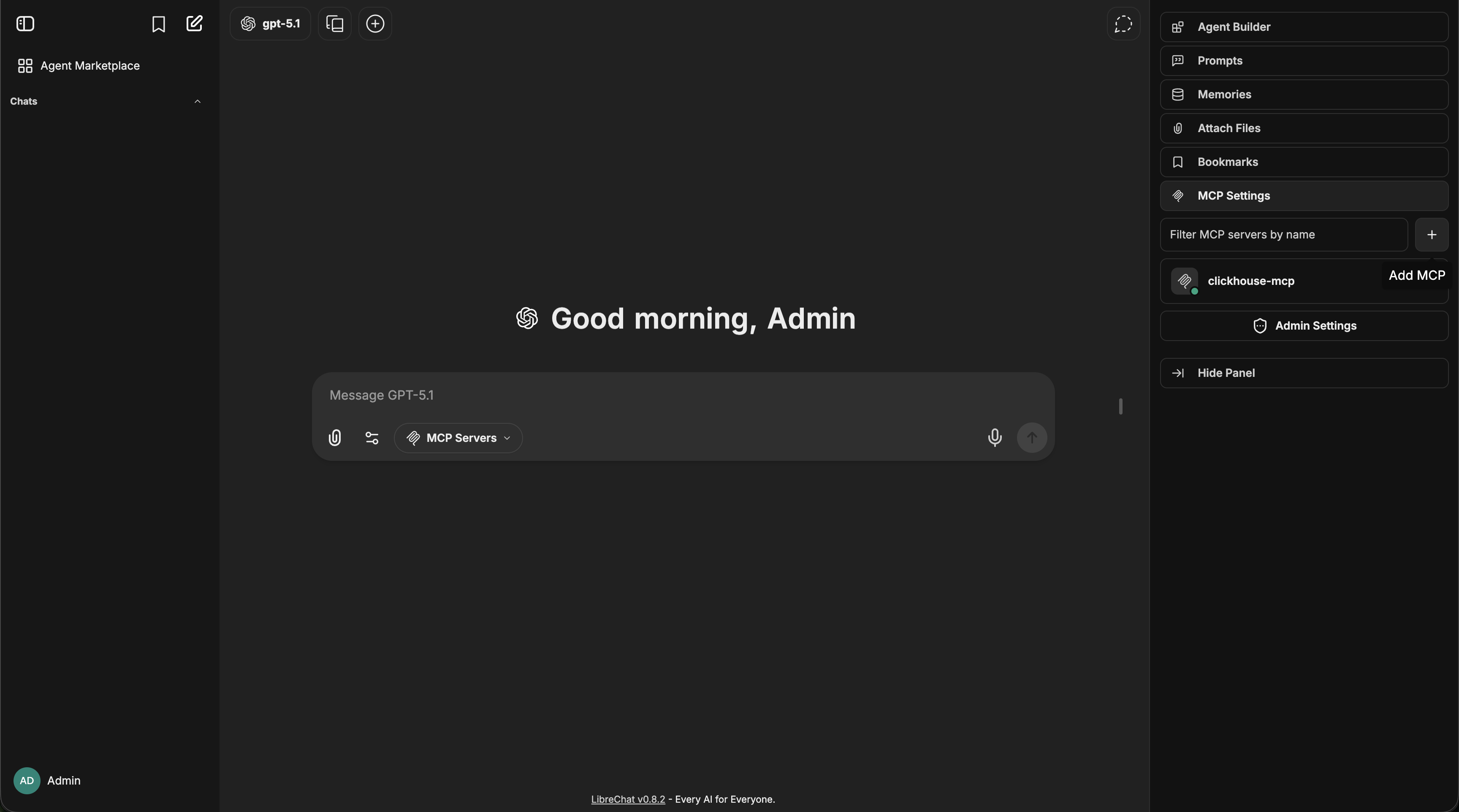

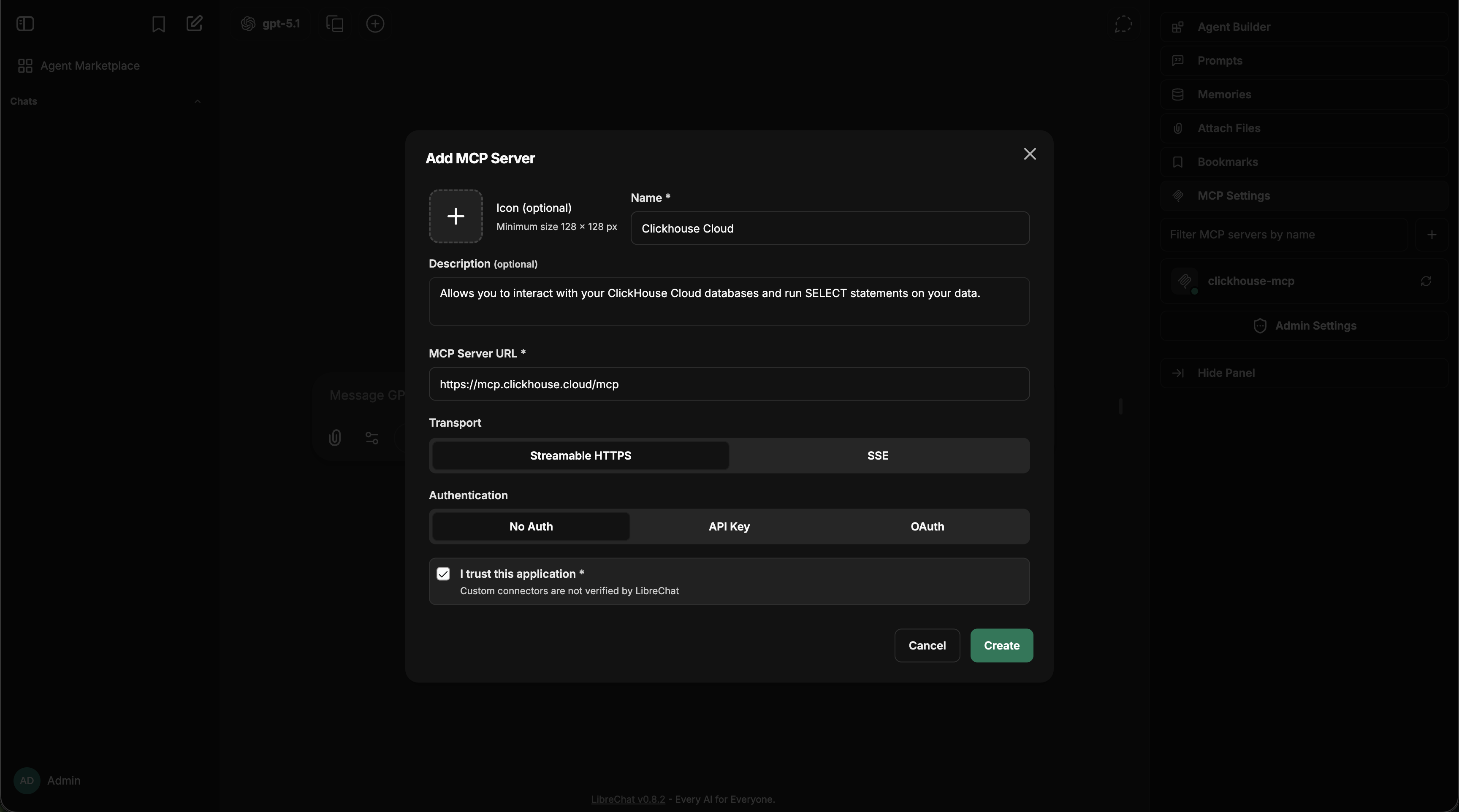

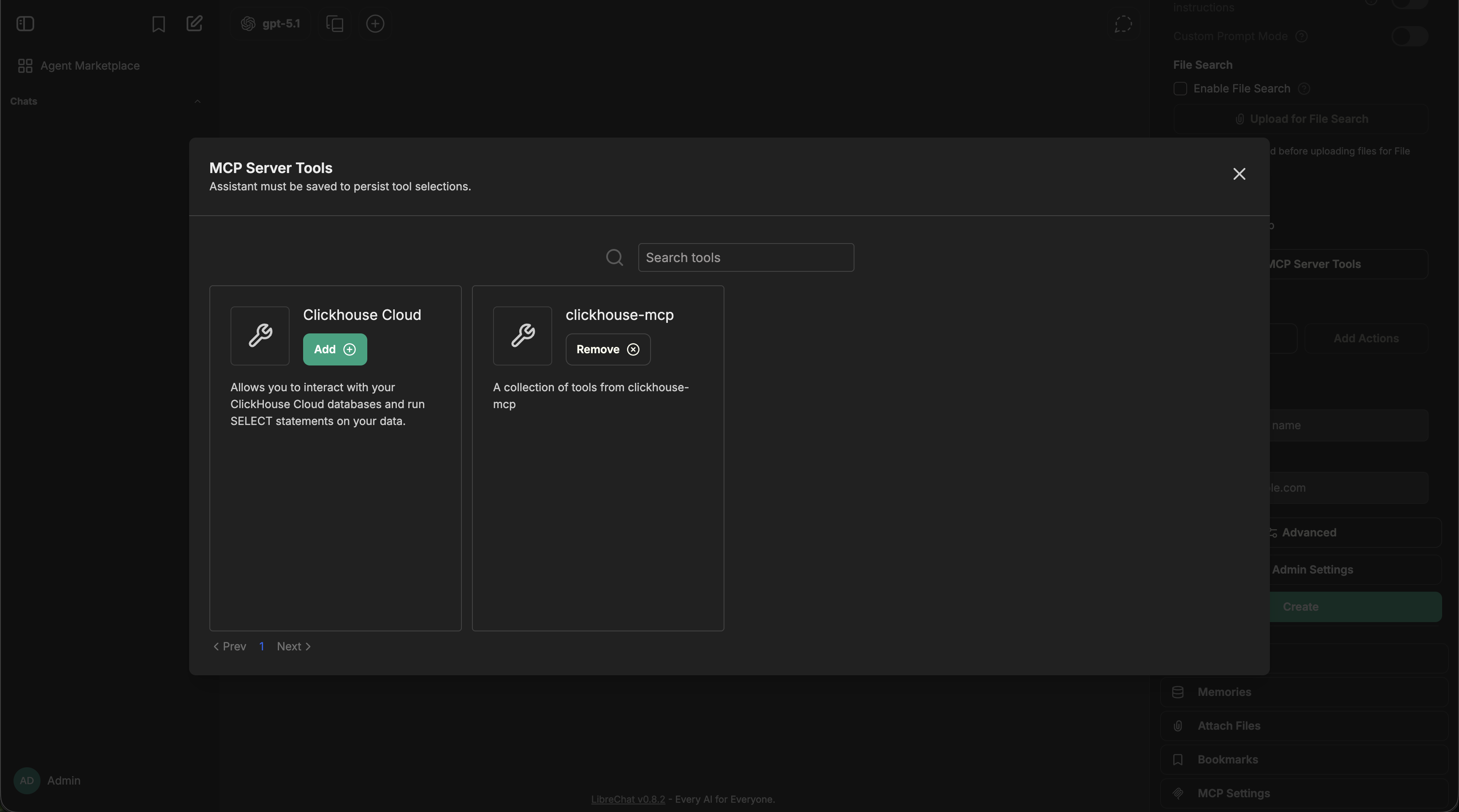

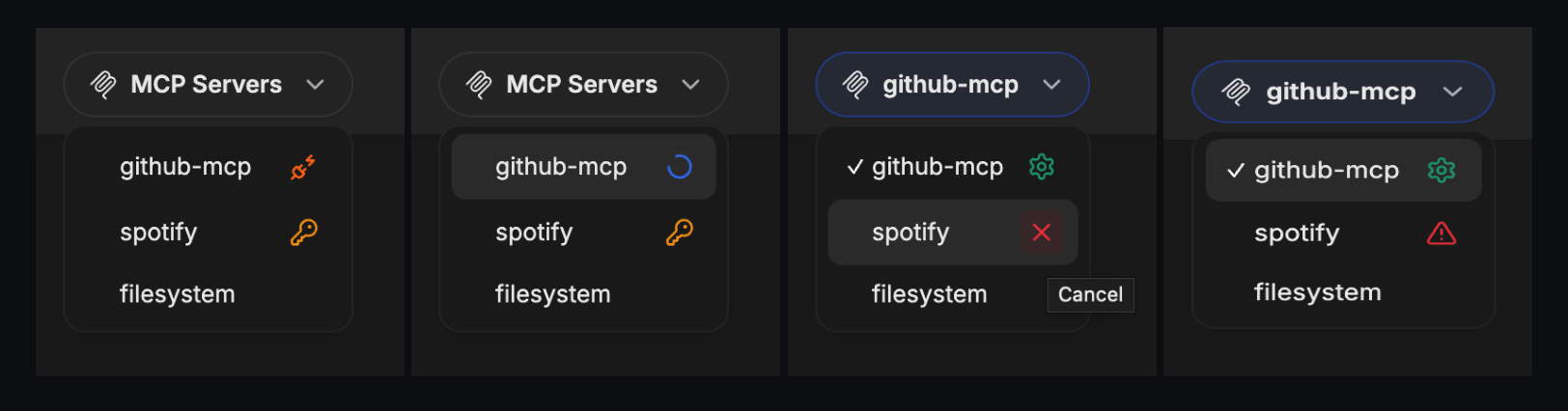

#### Agents with MCP Tools

1. Configure MCP servers in your `librechat.yaml` file

2. Restart LibreChat to initialize the connections

3. Create or edit an agent

4. Click the "Add Tools" button in the Agent Builder panel to open the Tools Dialog shown below

5. Select the MCP server(s) you want to add - each server appears as a single entry

6. Save your changes to the agent

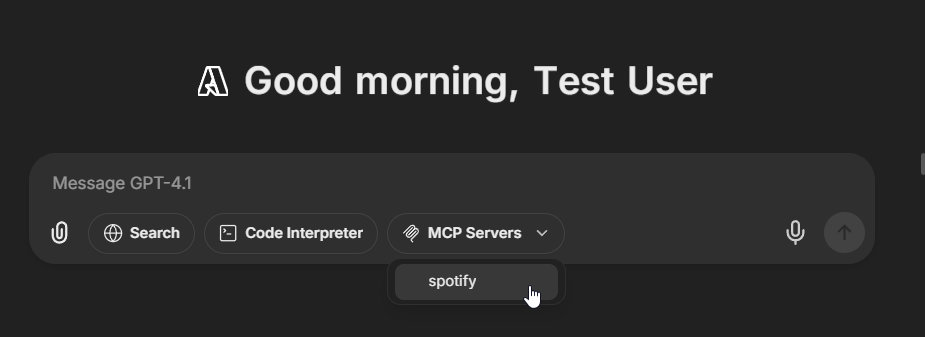

In this example, we've added the **[Spotify MCP server](https://github.com/LibreChat-AI/spotify-mcp)** to an agent.

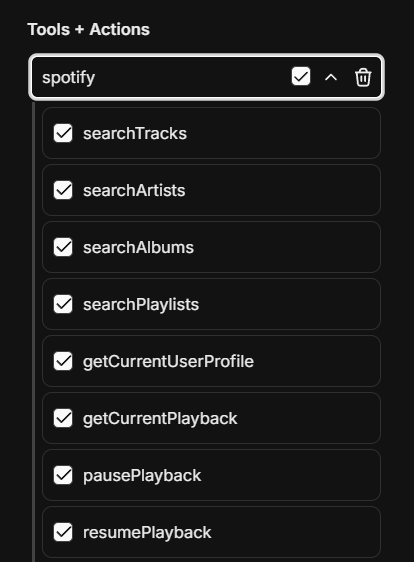

#### Managing MCP Tools

Once an MCP server is added to an agent, you can fine-tune which specific tools are available:

- After adding an MCP server, expand it to see all available tools

- Check/uncheck individual tools as needed

- For example, the Spotify MCP server provides ~20 tools (search, playback control, playlist management, etc.)

- This granular control lets you limit agents to only the tools they need

Learn More:

- [Configuring MCP Servers](/docs/features/mcp)

- [Model Context Protocol Introduction](https://modelcontextprotocol.io/introduction)

### Deferred Tools

Deferred tools allow agents to have access to many MCP tools without loading them all into the LLM context upfront. Instead, deferred tools are discoverable at runtime via a **Tool Search** mechanism.

This is especially useful when an agent has access to many MCP servers with dozens of tools—loading them all would consume a large portion of the context window and degrade response quality.

**How it works:**

- Tools marked as "deferred" are excluded from the initial LLM context

- A `ToolSearch` tool is automatically added, allowing the LLM to discover and load deferred tools on demand

- Once discovered, the tool is available for the rest of the conversation

**Configuring deferred tools:**

1. Open the Agent Builder and add MCP tools

2. Click the dropdown on any MCP tool

3. Toggle "Defer Loading" — deferred tools show a clock icon

**Note:** The `deferred_tools` capability is enabled by default. It can be toggled via the [`librechat.yaml` agents configuration](/docs/configuration/librechat_yaml/object_structure/agents#capabilities).

{/*

### Programmatic Tool Calling

**Note:** this feature is still in private beta and not accessible to all users.

Programmatic Tool Calling (PTC) allows MCP tools to be executed via code in a sandbox environment rather than being called directly by the LLM. This enables more complex tool orchestration—for example, calling multiple tools in sequence, processing results with code, or building dynamic workflows.

**How it works:**

- Tools marked for "code execution" calling are routed through the Code Interpreter sandbox

- The LLM writes code that calls the tools programmatically, allowing for loops, conditionals, and data transformations

- Results flow back through the sandbox to the LLM

**Configuring programmatic tools:**

1. Open the Agent Builder and add MCP tools

2. Click the dropdown on any MCP tool

3. Toggle "Code Execution" calling — programmatic tools show a code icon

**Note:** The `programmatic_tools` capability is **disabled by default** and requires the latest [Code Interpreter API](/docs/features/code_interpreter). It can be enabled via the [`librechat.yaml` agents configuration](/docs/configuration/librechat_yaml/object_structure/agents#capabilities).

*/}

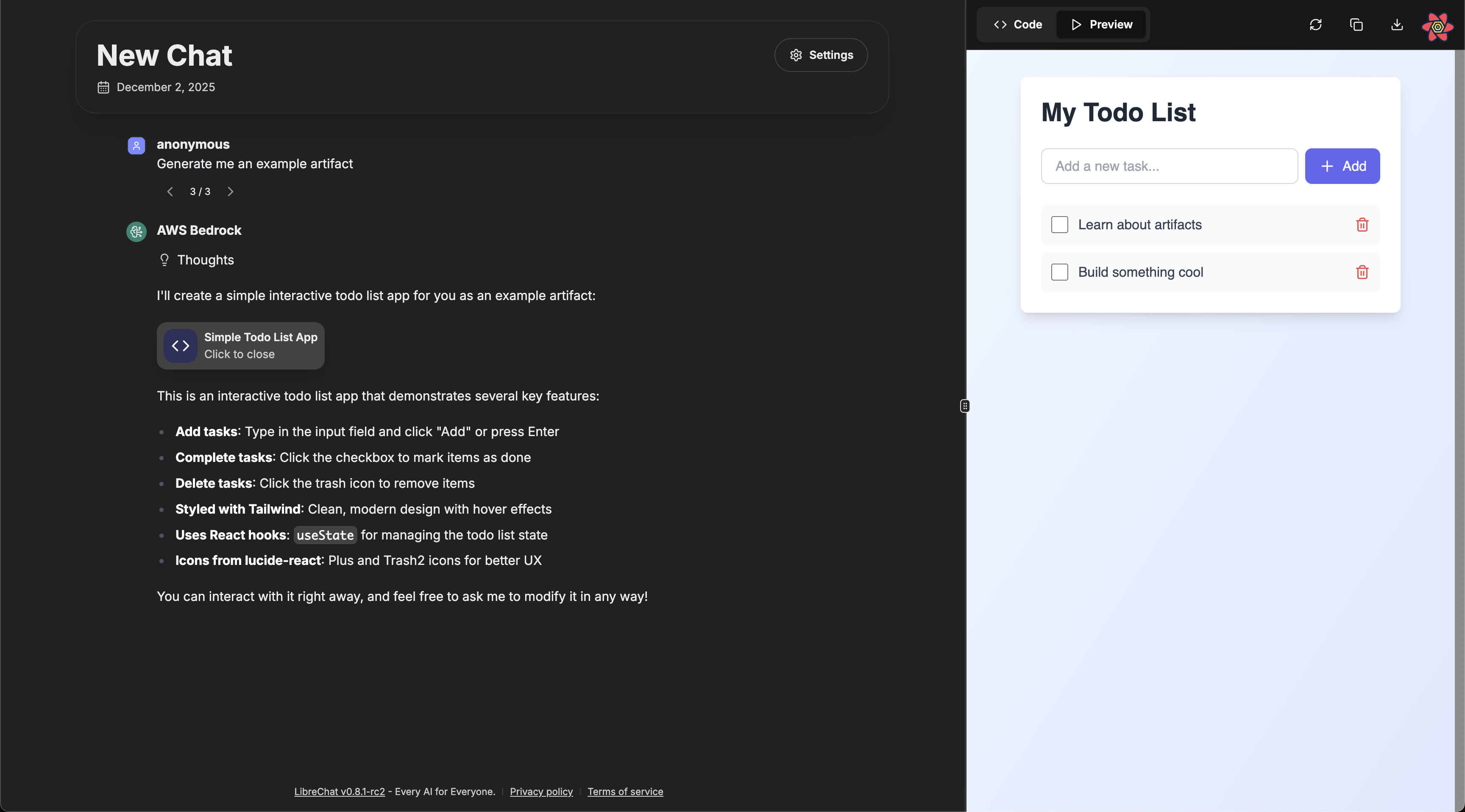

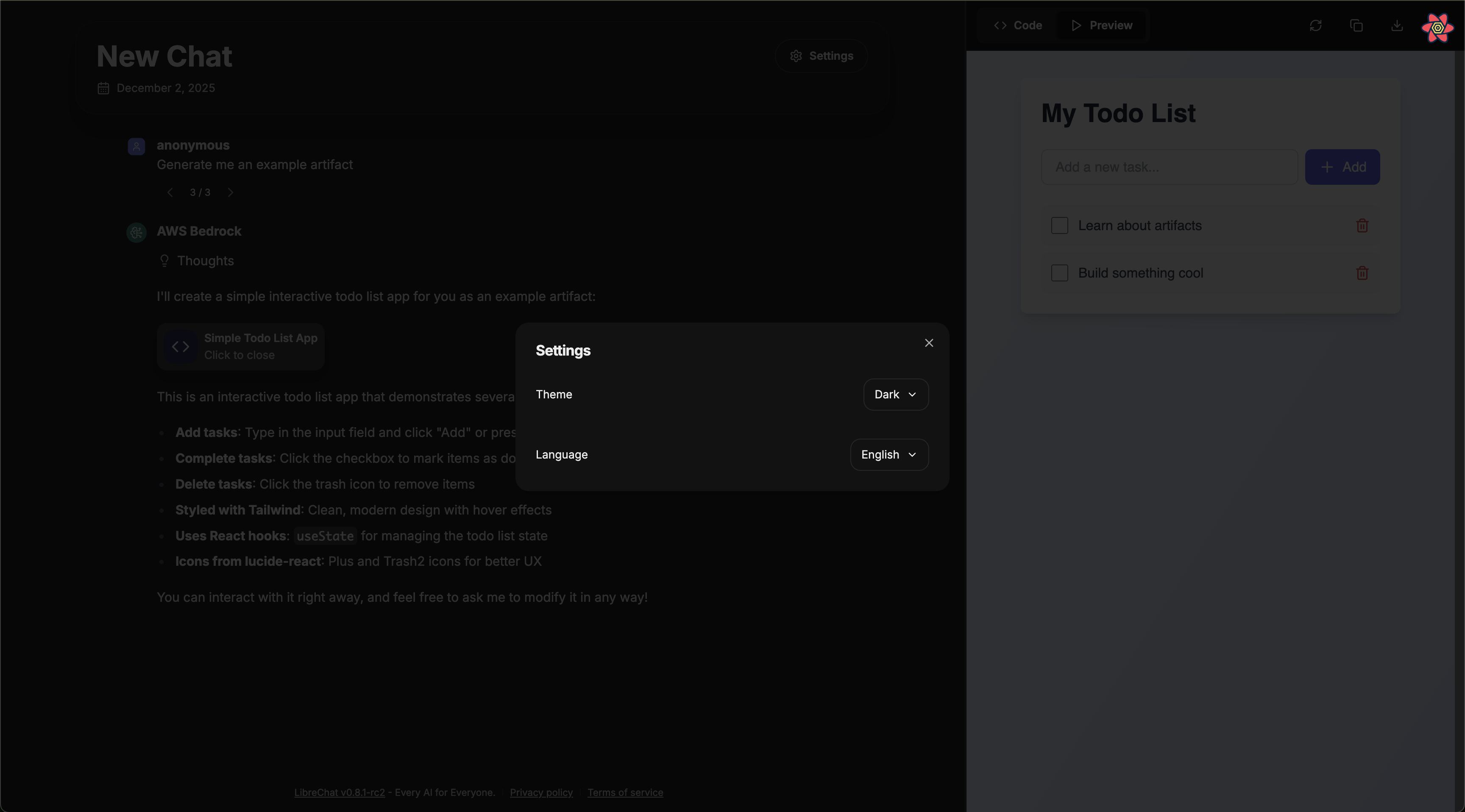

### Artifacts

The Artifacts capability enables your agent to generate and display interactive content:

- Create React components, HTML code, and Mermaid diagrams

- Display content in a separate UI window for clarity and interaction

- Configure artifact-specific instructions at the agent level

- [More info about Artifacts](/docs/features/artifacts)

When enabled, additional instructions specific to the use of artifacts are added by default. Options include:

- **Enable shadcn/ui instructions**: Adds instructions for using shadcn/ui components (a collection of re-usable components built using Radix UI and Tailwind CSS)

- **Custom Prompt Mode**: When enabled, the default artifacts system prompt will not be included, allowing you to provide your own custom instructions

Configuring artifacts at the agent level is the preferred approach, as it allows for more granular control compared to the legacy app-wide configuration.

If you enable Custom Prompt Mode, you should include at minimum the basic artifact format in your instructions.

Here's a simple example of the minimum instructions needed:

````md

When creating content that should be displayed as an artifact, use the following format:

:::artifact{identifier="unique-identifier" type="mime-type" title="Artifact Title"}

```

Your artifact content here

```

:::

For the type attribute, use one of:

- "text/html" for HTML content

- "application/vnd.mermaid" for Mermaid diagrams

- "application/vnd.react" for React components

- "image/svg+xml" for SVG images

````

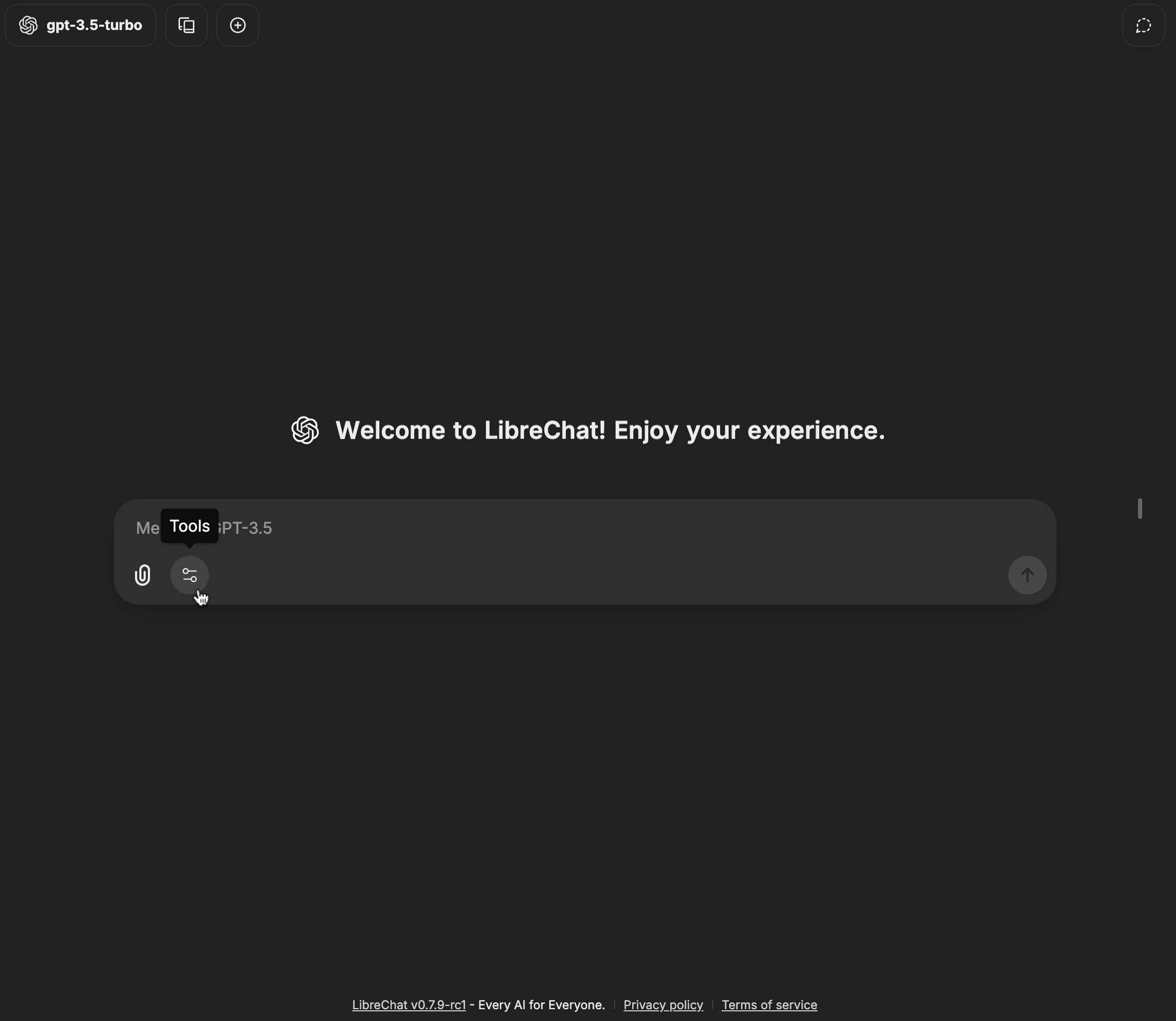

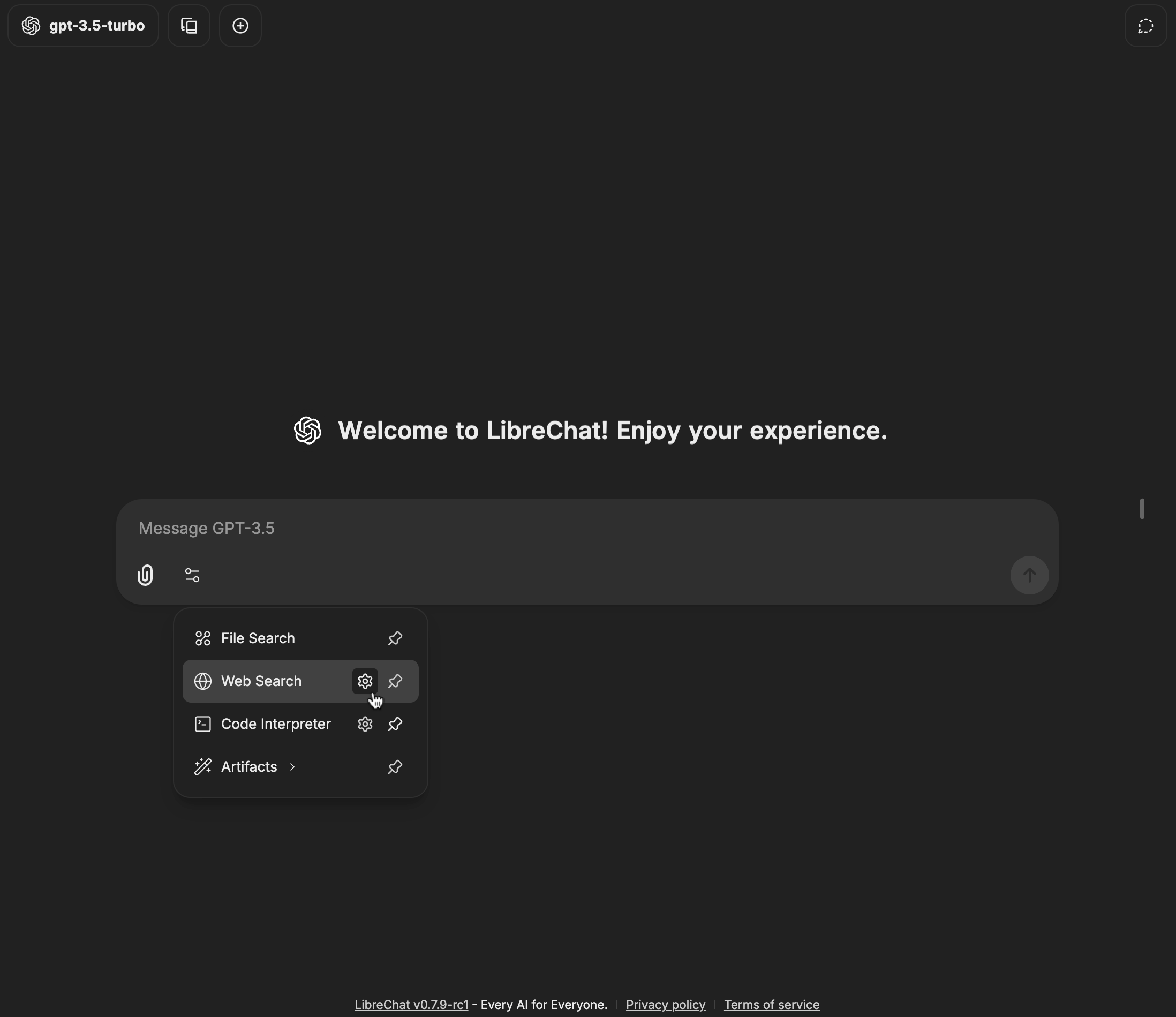

### Tools

Agents can also be enhanced with various built-in tools:

- **[OpenAI Image Tools](/docs/features/image_gen#1--openai-image-tools-recommended)**: Image generation & editing using **[GPT-Image-1](https://platform.openai.com/docs/models/gpt-image-1)**

- **[DALL-E-3](/docs/features/image_gen#2--dalle-legacy)**: Image generation from text descriptions

- **[Stable Diffusion](/docs/features/image_gen#3--stable-diffusion-local)** / **[Flux](/docs/features/image_gen#4--flux)**: Text-to-image generation

- **[Wolfram](/docs/configuration/tools/wolfram)**: Computational and mathematical capabilities

- **[OpenWeather](/docs/configuration/tools/openweather)**: Weather data retrieval

- **[Google Search](/docs/configuration/tools/google_search)**: Access to web search functionality

- **Calculator**: Mathematical calculations

- **Tavily Search**: Advanced search API with diverse data source integration

- **Azure AI Search**: Information retrieval

- **Traversaal**: A robust search API for LLM Agents

- Tools can be disabled using the [`librechat.yaml`](/docs/configuration/librechat_yaml) configuration file:

- [More info](/docs/configuration/librechat_yaml/object_structure/agents#capabilities)

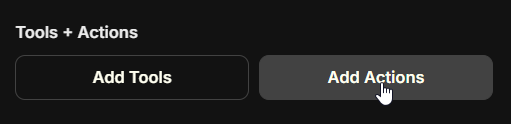

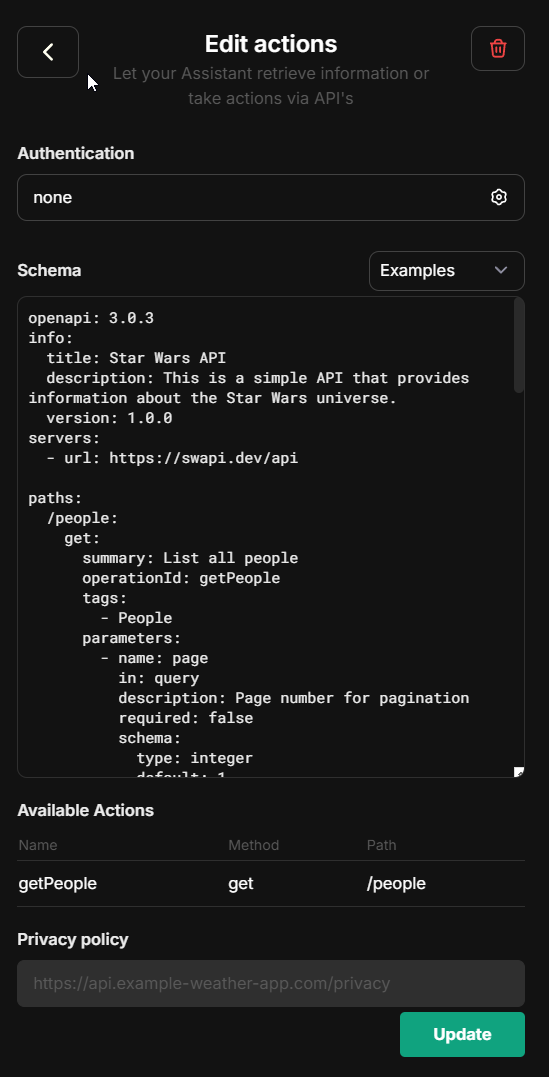

### Actions

With the Actions capability, you can dynamically create tools from [OpenAPI specs](https://swagger.io/specification/) to add to your Agents.

**Clicking the button above will open a form where you can input the OpenAPI spec URL and create an action:**

- Actions can be disabled using the [`librechat.yaml`](/docs/configuration/librechat_yaml) configuration file:

- [More info](/docs/configuration/librechat_yaml/object_structure/agents#capabilities)

- Individual domains can be whitelisted for agent actions:

- [More info](/docs/configuration/librechat_yaml/object_structure/actions#alloweddomains)

- Note that you can add add the 'x-strict': true flag at operation-level in the OpenAPI spec for actions.

If using an OpenAI model supporting it, this will automatically generate function calls with 'strict' mode enabled.

- Strict mode supports only a partial subset of json. Read https://platform.openai.com/docs/guides/structured-outputs/some-type-specific-keywords-are-not-yet-supported for details.

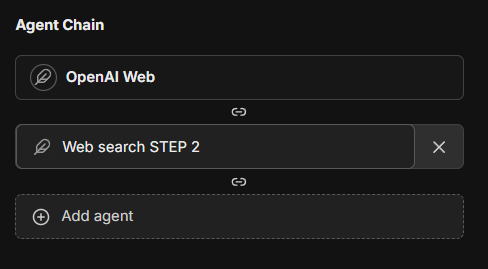

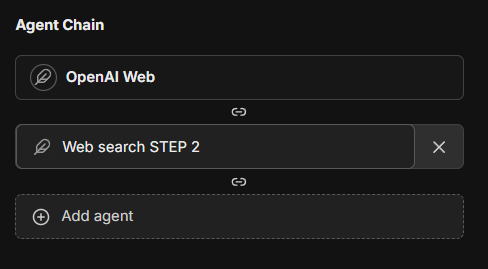

### Agent Chain

The Agent Chain capability enables a Mixture-of-Agents (MoA) approach, allowing you to create a sequence of agents that work together:

- Create chains of specialized agents for complex tasks

- Each agent in the chain can access outputs from previous agents

- Configure the maximum number of steps for the agent chain

- **Note:** Access this feature from the Advanced Settings panel in the Agent Builder

- **Note:** This feature is currently in beta and may be subject to change

- The current maximum of agents that can be chained is 10, but this may be configurable in the future

This feature introduces a layered Mixture-of-Agents architecture to LibreChat, where each agent takes all the outputs from agents in the previous layer as auxiliary information in generating its response, as described in [the eponymous "Mixture-of-Agents" paper](https://arxiv.org/abs/2406.04692).

### Advanced Settings

Advanced settings for your agent (found in the Advanced view of the Agent form) outside of "capabilities."

#### Max Agent Steps

This setting allows you to limit the number of steps an agent can take in a "run," which refers to the agent loop before a final response is given.

If left unconfigured, the default is 25 steps, but you can adjust this to suit your needs. For admins, you can set a global default as well as a global maximum in the [`librechat.yaml`](/docs/configuration/librechat_yaml/object_structure/agents#recursionlimit) file.

A "step" refers to either an AI API request or a round of tool usage (1 or many tools, depending on how many tool calls the LLM provides from a single request).

A single, non-tool response is 1 step. A singular round of tool usage is usually 3 steps:

1. API Request -> 2. Tool Usage (1 or many tools) -> 3. Follow-up API Request

## File Management

Agents support multiple ways to work with files:

### In Chat Interface

When chatting with an agent, you have four upload options:

1. **Upload Images**

- Uploads images for native vision model support

- Sends images directly to the model provider

2. **Upload as Text** (requires `context` capability)

- Extracts and includes full document content in conversation

- Uses text parsing by default; enhanced by OCR if configured

- Content exists only in current conversation

- See [Upload as Text](/docs/features/upload_as_text)

3. **Upload for File Search** (requires `file_search` capability, toggled ON)

- Uses semantic search (RAG) with vector stores

- Returns relevant chunks via tool use

- Optimal for large documents/multiple files

- Sub-optimal for structured data (CSV, Excel, JSON, etc.)

4. **Upload for Code Interpreter** (requires `execute_code` capability, toggled ON)

- Adds files to code interpreter environment

- Optimal for structured data (CSV, Excel, JSON, etc.)

- More info about [Code Interpreter](/docs/features/code_interpreter)

### In Agent Builder

When configuring an agent, you can attach files in different categories:

1. **Image Upload**: For visual content the agent can reference

2. **File Search Upload**: Documents for RAG capabilities

3. **Code Interpreter Upload**: Files for code processing

4. **File Context**: Documents with extracted text to supplement agent instructions

**File Context** uses the `context` capability and works just like ["Upload as Text"](/docs/features/upload_as_text) - it uses text parsing by default and is enhanced by OCR when configured. Text is extracted at upload time and stored in the agent's instructions. This is ideal for giving agents persistent knowledge from documents, PDFs, code files, or images with text.

**Processing priority:** OCR > STT > text parsing (same as Upload as Text)

**Note:** The extracted text is included as part of the agent's system instructions.

## Sharing and Permissions

### Administrator Controls

Administrators have access to global permission settings within the agent builder UI:

- Enable/disable agent sharing across all users

- Control agent usage permissions

- Manage agent creation rights

- Configure platform-wide settings

The first account created for your instance is an administrator. If you need to add an additional administrator, you may [access MongoDB](/docs/configuration/mongodb/mongodb_auth) and update the user's profile:

```

db.users.updateOne(

{ email: 'USER_EMAIL_ADDRESS' },

{ $set: { role: 'ADMIN' } }

)

```

The use of agents for all users can also be disabled via config, [more info](/docs/configuration/librechat_yaml/object_structure/interface).

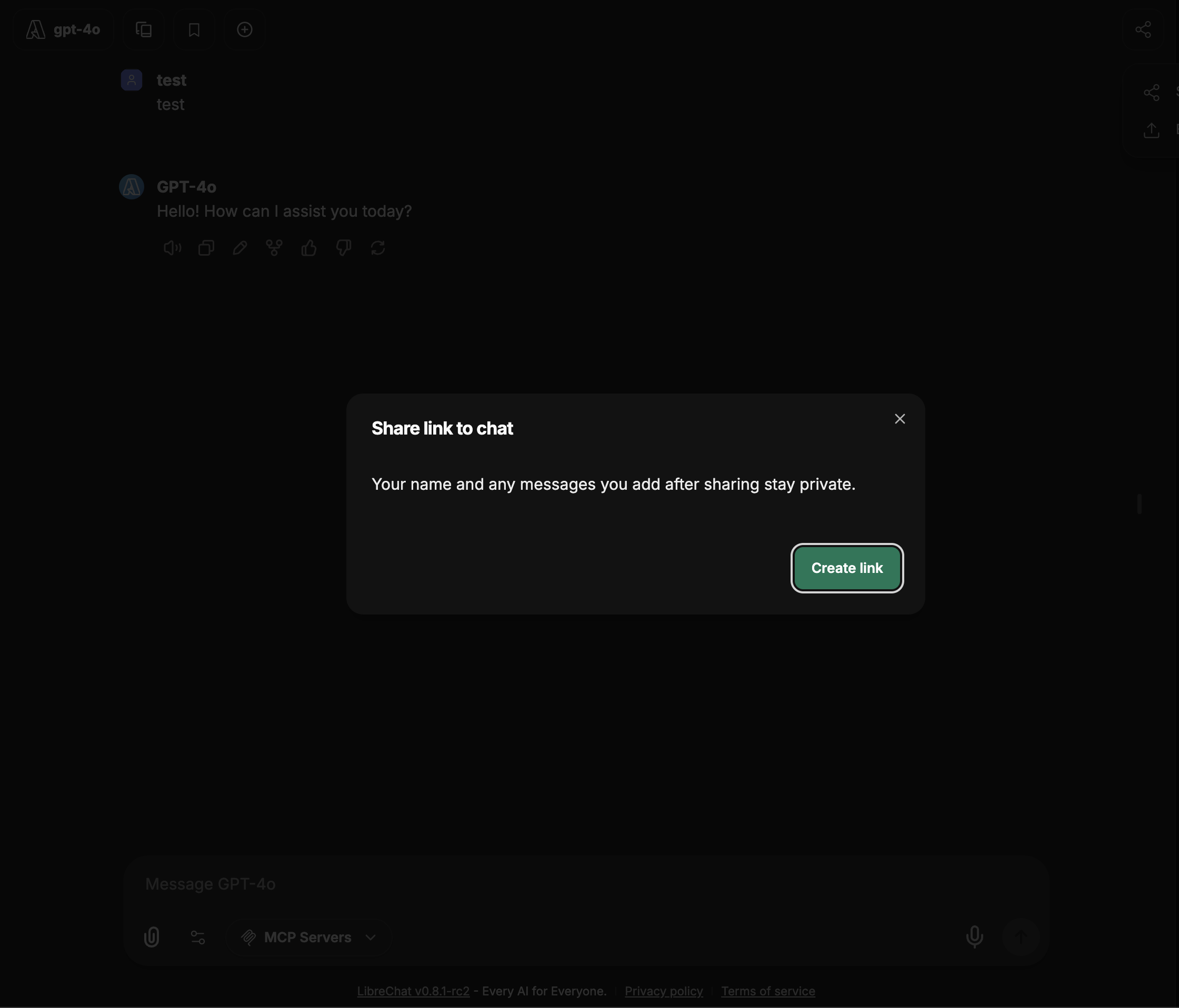

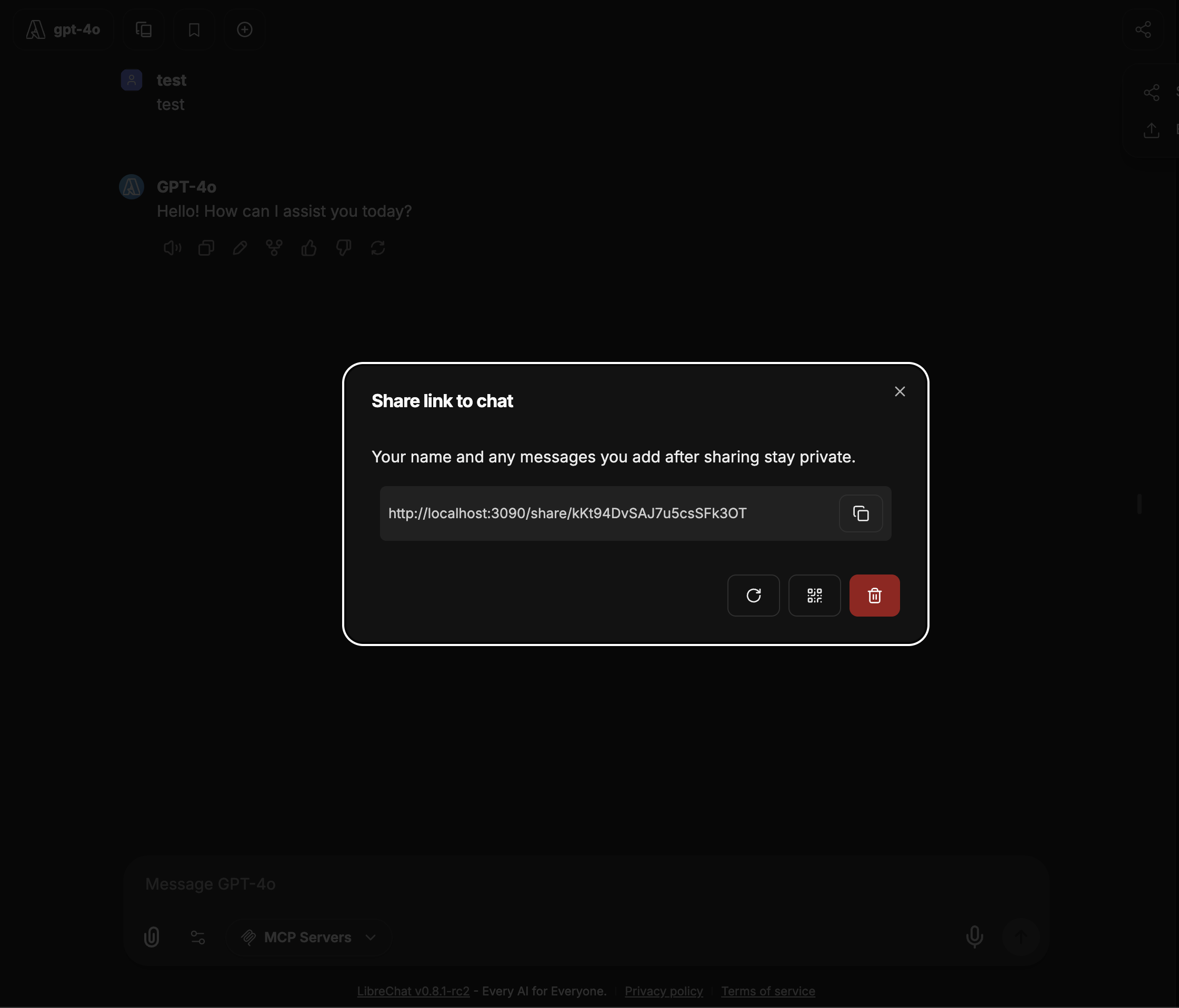

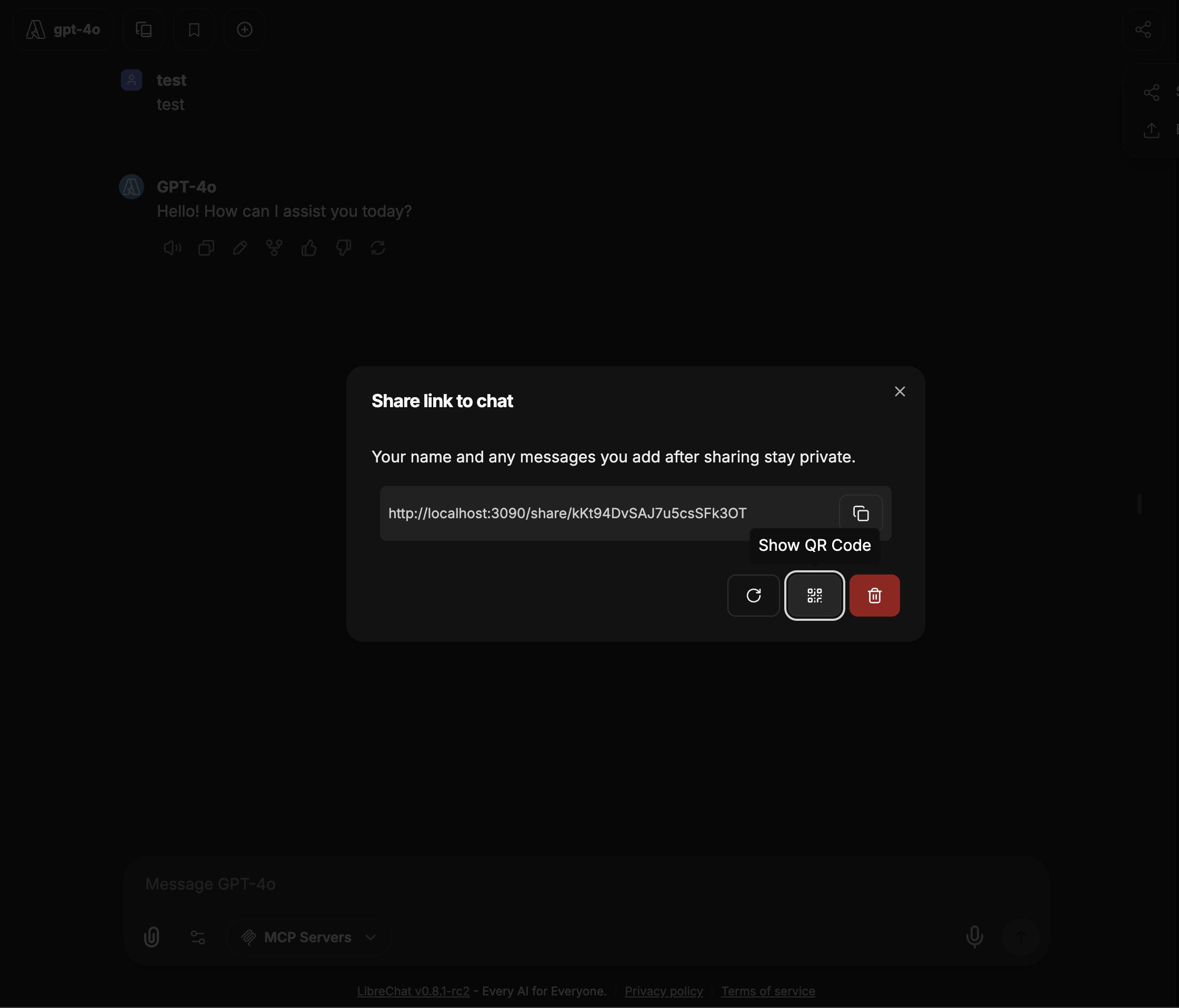

### User-Level Sharing

Individual users can:

- Share their agents with all users (if enabled)

- Control editing permissions for shared agents

- Manage access to their created agents

### Notes

- Instructions, model parameters, attached files, and tools are only exposed to the user if they have editing permissions

- An agent may leak any attached data, whether instructions or files, through conversation--make sure your instructions are robust against this

- Only original authors and administrators can delete shared agents

- Agents are private to authors unless shared

## Optional Configuration

LibreChat allows admins to configure the use of agents via the [`librechat.yaml`](/docs/configuration/librechat_yaml) file:

- Disable Agents for all users (including admins): [more info](/docs/configuration/librechat_yaml/object_structure/interface)

- Customize agent capabilities using: [more info](/docs/configuration/librechat_yaml/object_structure/agents)

## Best Practices

- Provide clear, specific instructions for your agent

- Carefully consider which tools are necessary for your use case

- Organize files appropriately across the four upload categories

- Review permission settings before sharing agents

- Test your agent thoroughly before deploying to other users

## Recap

1. Select "Agents" from the endpoint dropdown menu

2. Open the Agent Builder panel

3. Fill out the required agent details

4. Configure desired capabilities (Code Interpreter, File Search, File Context, etc.)

5. Add necessary tools and files

6. Set sharing permissions if desired

7. Create and start using your agent

When chatting with agents, you can:

- Use "Upload as Text" to include full document content in conversations (text parsing by default, enhanced by OCR)

- Use "Upload for File Search" for semantic search over documents (requires RAG API)

- Add files to agent's "File Context" to included a file's full content as part of the agent's system instructions

## Migration Required (v0.8.0-rc3+)

Starting from version v0.8.0-rc3, LibreChat uses a new Access Control List (ACL) based permission system for agents. If you're upgrading from an earlier version, you must run the agent permissions migration for existing agents to remain accessible.

### What the Migration Does

The agent permissions migration transitions your agents from a simple ownership model to a sophisticated ACL-based system with multiple permission levels:

- **OWNER**: Full control over the agent

- **EDITOR**: Can view and modify the agent

- **VIEWER**: Read-only access to the agent

Without running this migration, existing agents will be inaccessible through the new permission-aware API endpoints.

### Running the Migration

Choose the appropriate command based on your deployment method:

#### 1. For the default `docker-compose.yml` (if you use `docker compose up` to start the app):

**Preview changes (dry run):**

```bash

docker compose exec api npm run migrate:agent-permissions:dry-run

```

**Execute migration:**

```bash

docker compose exec api npm run migrate:agent-permissions

```

**Custom batch size (for large datasets):**

```bash

docker compose exec api npm run migrate:agent-permissions:batch

```

#### 2. For the `deploy-compose.yml` (if you followed the [Ubuntu Docker Guide](/docs/remote/docker_linux)):

**Preview changes (dry run):**

```bash

docker exec -it LibreChat-API /bin/sh -c "cd .. && npm run migrate:agent-permissions:dry-run"

```

**Execute migration:**

```bash

docker exec -it LibreChat-API /bin/sh -c "cd .. && npm run migrate:agent-permissions"

```

**Custom batch size (for large datasets):**

```bash

docker exec -it LibreChat-API /bin/sh -c "cd .. && npm run migrate:agent-permissions:batch"

```

#### 3. For local development (from project root):

**Preview changes (dry run):**

```bash

npm run migrate:agent-permissions:dry-run

```

**Execute migration:**

```bash

npm run migrate:agent-permissions

```

**Custom batch size (for large datasets):**

```bash

npm run migrate:agent-permissions:batch

```

### What Happens During Migration

- **Private Agents**: Remain accessible only to their creators (receive OWNER permission)

- **Shared Agents**: If an agent was previously shared, it will receive appropriate ACL entries as a Public Agent (shared to all users)

- **System Detection**: LibreChat automatically detects unmigrated agents at startup and displays a warning

You can adjust the resulting agent permissions via the Agent Builder UI.

The same migration process applies to prompts. If you also have existing prompts, run the prompt permissions migration using the same commands but replace `agent` with `prompt` in the command names.

## Agents API (Beta)

LibreChat agents can also be accessed programmatically via API, enabling external applications and scripts to interact with your agents using OpenAI-compatible SDKs or the Open Responses format.

See the [Agents API documentation](/docs/features/agents_api) for setup and usage details.

## What's next?

LibreChat Agents usher in a new era for the app where future pipelines can be streamlined via Agents for specific tasks and workflows across your experience in LibreChat.

Future updates will include:

- General improvements to the current Agent experience

- Multi-agent orchestration for complex workflows

- Ability to customize agents for various functions: titling (chat thread naming), memory management (user context/history), and prompt enhancement (input assistance/predictions)

- More tools, configurable tool parameters, dynamic tool creation.

Furthermore, the update introduces a new paradigm for LibreChat, as its underlying architecture provides a much needed refresh for the app, optimizing both the user experience and overall app performance.

To highlight one notable optimization, an AI generation of roughly 1000 tokens will transfer about 1 MB of data using traditional endpoints (at the time of writing, any endpoint option besides Agents and AWS Bedrock).

Using an agent, the same generation will transfer about about 52 kb of data, a 95% reduction in data transfer, which is that much less of a load on the server and the user's device.

---

AI Agents in LibreChat provide a powerful way to create specialized assistants without coding knowledge while maintaining the flexibility to work with your preferred AI models and providers.

---

#LibreChat #AIAssistants #NoCode #OpenSource

# Agents API (Beta) (/docs/features/agents_api)

The Agents API is currently in beta. Endpoints, request/response formats, and behavior may change as we iterate toward a stable release.

LibreChat exposes your agents through two API-compatible interfaces, allowing external applications, scripts, and services to interact with your agents programmatically.

## Overview

The Agents API provides two interfaces:

- **OpenAI-compatible Chat Completions** — `POST /api/agents/v1/chat/completions`

- **Open Responses API** — `POST /api/agents/v1/responses`

Both are authenticated via API keys and support streaming responses, making it easy to integrate LibreChat agents into existing workflows that already use OpenAI SDKs or similar tooling.

LibreChat is adopting [Open Responses](https://www.openresponses.org/) as its primary API framework for serving agents. While the Chat Completions endpoint provides backward compatibility with existing OpenAI-compatible tooling, the Open Responses endpoint represents the future direction.

## Enabling the Agents API

The Agents API is gated behind the `remoteAgents` interface configuration. All permissions default to `false`.

```yaml filename="librechat.yaml"

interface:

remoteAgents:

use: true

create: true

```

See [Interface Configuration — remoteAgents](/docs/configuration/librechat_yaml/object_structure/interface#remoteagents) for all available options.

**Note:** Admin users have all remote agent permissions enabled by default.

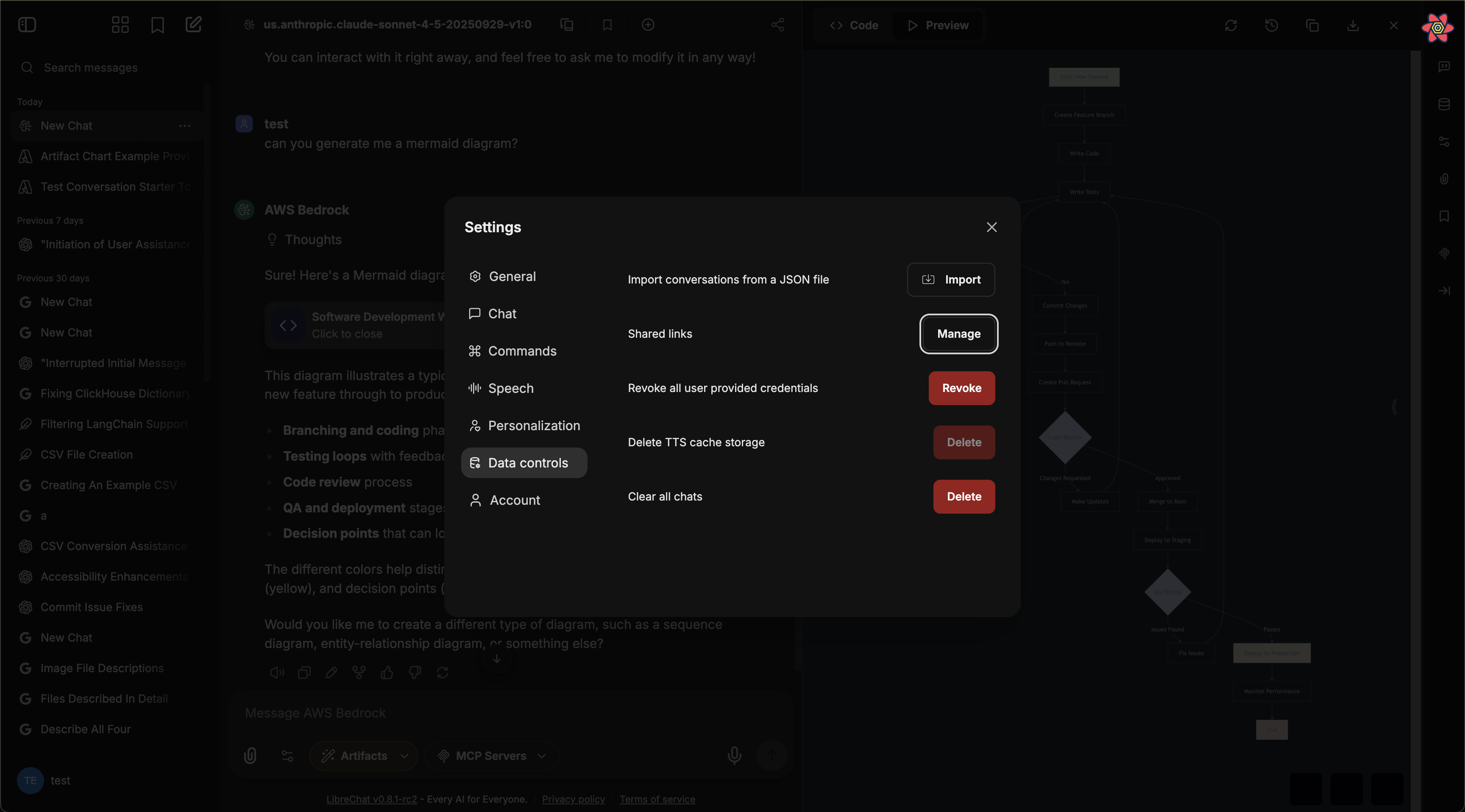

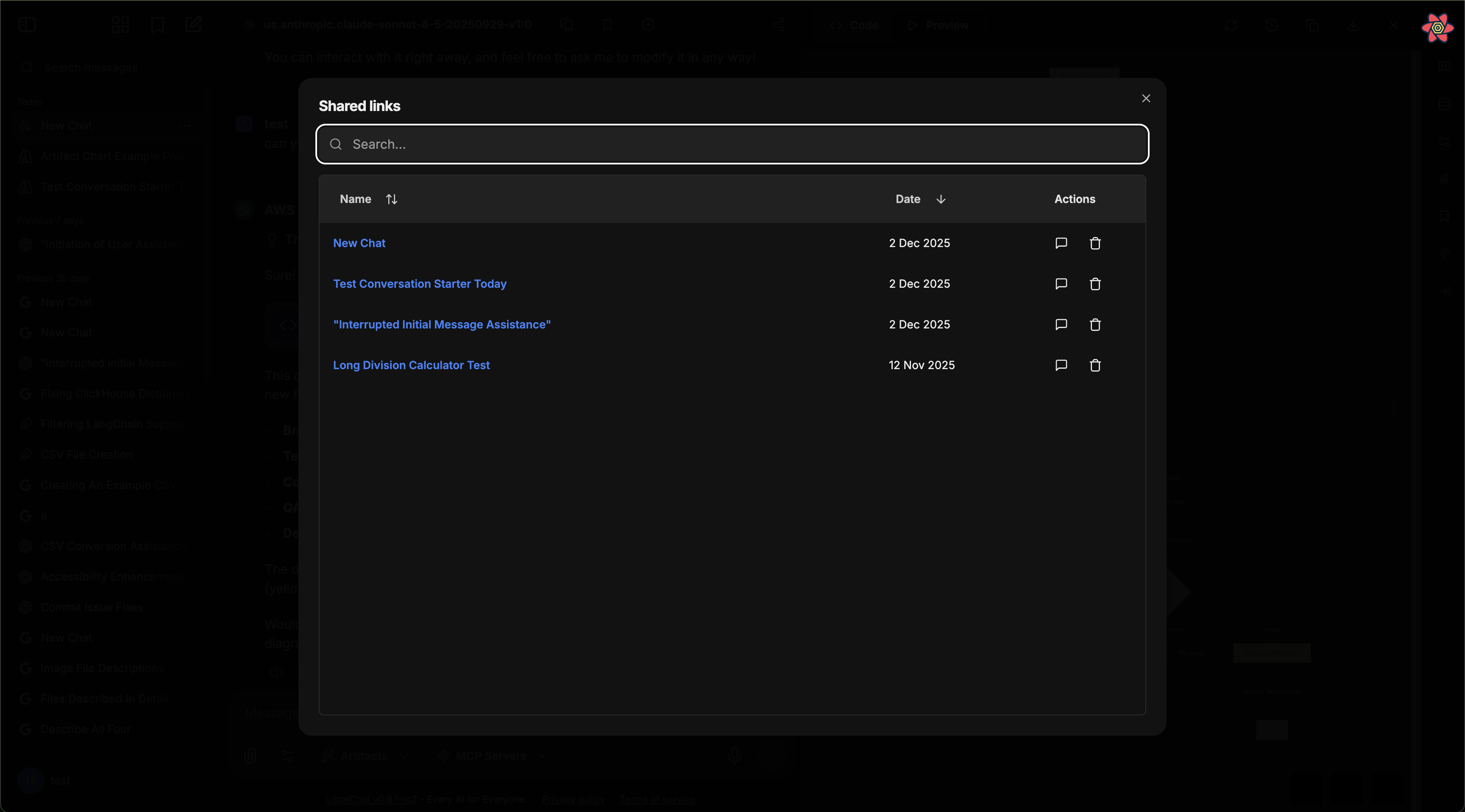

## API Key Management

Once `remoteAgents.use` and `remoteAgents.create` are enabled, users can generate API keys from the LibreChat UI. These keys authenticate requests to the Agents API.

## Endpoints

### Chat Completions (OpenAI-compatible)

```

POST /api/agents/v1/chat/completions

```

Use any OpenAI-compatible SDK by pointing it at your LibreChat instance. The `model` parameter corresponds to an agent ID.

**Example with curl:**

```bash

curl -X POST https://your-librechat-instance/api/agents/v1/chat/completions \

-H "Authorization: Bearer YOUR_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "agent_abc123",

"messages": [

{"role": "user", "content": "Hello, what can you help me with?"}

],

"stream": true

}'

```

**Example with OpenAI SDK (Python):**

```python

from openai import OpenAI

client = OpenAI(

base_url="https://your-librechat-instance/api/agents/v1",

api_key="YOUR_API_KEY"

)

response = client.chat.completions.create(

model="agent_abc123",

messages=[{"role": "user", "content": "Hello!"}],

stream=True

)

for chunk in response:

print(chunk.choices[0].delta.content, end="")

```

### List Models

```

GET /api/agents/v1/models

```

Returns available agents as models. Useful for discovering which agents are accessible with your API key.

### Open Responses API

```

POST /api/agents/v1/responses

```

The Open Responses endpoint follows the [Open Responses specification](https://www.openresponses.org/), an open inference standard initiated by OpenAI and built by the open-source AI community. It is designed for agentic workflows with native support for reasoning, tool use, structured outputs, and streaming semantic events.

```bash

curl -X POST https://your-librechat-instance/api/agents/v1/responses \

-H "Authorization: Bearer YOUR_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "agent_abc123",

"input": "What is the weather today?"

}'

```

## Token Usage Tracking

All Agents API requests track token usage against the user's balance (when token spending is configured). Both input and output tokens are counted, including cache tokens for providers that support them (OpenAI, Anthropic).

## Roadmap

- **Open Responses as primary interface** — We plan to expand the Open Responses endpoint with full support for agentic loops, tool orchestration, and streaming semantic events.

- **Anthropic Messages API** — We may add support for the Anthropic Messages API format as an additional interface in the future.

## Related Documentation

- [Agents](/docs/features/agents) — Creating and configuring agents

- [Interface Configuration — remoteAgents](/docs/configuration/librechat_yaml/object_structure/interface#remoteagents) — Access control settings

- [Token Usage](/docs/configuration/token_usage) — Configuring token spending and balance

- [Open Responses Specification](https://www.openresponses.org/) — The open inference standard

# Artifacts - Generative UI (/docs/features/artifacts)

- **Generative UI:** Create React components, HTML code, and Mermaid diagrams

- **Flexible Integration:** Use any model you have set up

- **Iterative Design:** Rapidly improve and refine generated outputs

- **Agent Integration:** Configure artifacts at the agent level (recommended approach)

> **Note:** The preferred way to use artifacts is now through the [Agents feature](/docs/features/agents#artifacts), which allows for more granular control by enabling/disabling artifacts at the agent level rather than app-wide.

This feature introduces a layered Mixture-of-Agents architecture to LibreChat, where each agent takes all the outputs from agents in the previous layer as auxiliary information in generating its response, as described in [the eponymous "Mixture-of-Agents" paper](https://arxiv.org/abs/2406.04692).

### Advanced Settings

Advanced settings for your agent (found in the Advanced view of the Agent form) outside of "capabilities."

#### Max Agent Steps

This setting allows you to limit the number of steps an agent can take in a "run," which refers to the agent loop before a final response is given.

If left unconfigured, the default is 25 steps, but you can adjust this to suit your needs. For admins, you can set a global default as well as a global maximum in the [`librechat.yaml`](/docs/configuration/librechat_yaml/object_structure/agents#recursionlimit) file.

A "step" refers to either an AI API request or a round of tool usage (1 or many tools, depending on how many tool calls the LLM provides from a single request).

A single, non-tool response is 1 step. A singular round of tool usage is usually 3 steps:

1. API Request -> 2. Tool Usage (1 or many tools) -> 3. Follow-up API Request

## File Management

Agents support multiple ways to work with files:

### In Chat Interface

When chatting with an agent, you have four upload options:

1. **Upload Images**

- Uploads images for native vision model support

- Sends images directly to the model provider

2. **Upload as Text** (requires `context` capability)

- Extracts and includes full document content in conversation

- Uses text parsing by default; enhanced by OCR if configured

- Content exists only in current conversation

- See [Upload as Text](/docs/features/upload_as_text)

3. **Upload for File Search** (requires `file_search` capability, toggled ON)

- Uses semantic search (RAG) with vector stores

- Returns relevant chunks via tool use

- Optimal for large documents/multiple files

- Sub-optimal for structured data (CSV, Excel, JSON, etc.)

4. **Upload for Code Interpreter** (requires `execute_code` capability, toggled ON)

- Adds files to code interpreter environment

- Optimal for structured data (CSV, Excel, JSON, etc.)

- More info about [Code Interpreter](/docs/features/code_interpreter)

### In Agent Builder

When configuring an agent, you can attach files in different categories:

1. **Image Upload**: For visual content the agent can reference

2. **File Search Upload**: Documents for RAG capabilities

3. **Code Interpreter Upload**: Files for code processing

4. **File Context**: Documents with extracted text to supplement agent instructions

**File Context** uses the `context` capability and works just like ["Upload as Text"](/docs/features/upload_as_text) - it uses text parsing by default and is enhanced by OCR when configured. Text is extracted at upload time and stored in the agent's instructions. This is ideal for giving agents persistent knowledge from documents, PDFs, code files, or images with text.

**Processing priority:** OCR > STT > text parsing (same as Upload as Text)

**Note:** The extracted text is included as part of the agent's system instructions.

## Sharing and Permissions

### Administrator Controls

Administrators have access to global permission settings within the agent builder UI:

- Enable/disable agent sharing across all users

- Control agent usage permissions

- Manage agent creation rights

- Configure platform-wide settings

The first account created for your instance is an administrator. If you need to add an additional administrator, you may [access MongoDB](/docs/configuration/mongodb/mongodb_auth) and update the user's profile:

```

db.users.updateOne(

{ email: 'USER_EMAIL_ADDRESS' },

{ $set: { role: 'ADMIN' } }

)

```

The use of agents for all users can also be disabled via config, [more info](/docs/configuration/librechat_yaml/object_structure/interface).

### User-Level Sharing

Individual users can:

- Share their agents with all users (if enabled)

- Control editing permissions for shared agents

- Manage access to their created agents

### Notes

- Instructions, model parameters, attached files, and tools are only exposed to the user if they have editing permissions

- An agent may leak any attached data, whether instructions or files, through conversation--make sure your instructions are robust against this

- Only original authors and administrators can delete shared agents

- Agents are private to authors unless shared

## Optional Configuration

LibreChat allows admins to configure the use of agents via the [`librechat.yaml`](/docs/configuration/librechat_yaml) file:

- Disable Agents for all users (including admins): [more info](/docs/configuration/librechat_yaml/object_structure/interface)

- Customize agent capabilities using: [more info](/docs/configuration/librechat_yaml/object_structure/agents)

## Best Practices

- Provide clear, specific instructions for your agent

- Carefully consider which tools are necessary for your use case

- Organize files appropriately across the four upload categories

- Review permission settings before sharing agents

- Test your agent thoroughly before deploying to other users

## Recap

1. Select "Agents" from the endpoint dropdown menu

2. Open the Agent Builder panel

3. Fill out the required agent details

4. Configure desired capabilities (Code Interpreter, File Search, File Context, etc.)

5. Add necessary tools and files

6. Set sharing permissions if desired

7. Create and start using your agent

When chatting with agents, you can:

- Use "Upload as Text" to include full document content in conversations (text parsing by default, enhanced by OCR)

- Use "Upload for File Search" for semantic search over documents (requires RAG API)

- Add files to agent's "File Context" to included a file's full content as part of the agent's system instructions

## Migration Required (v0.8.0-rc3+)

Starting from version v0.8.0-rc3, LibreChat uses a new Access Control List (ACL) based permission system for agents. If you're upgrading from an earlier version, you must run the agent permissions migration for existing agents to remain accessible.

### What the Migration Does

The agent permissions migration transitions your agents from a simple ownership model to a sophisticated ACL-based system with multiple permission levels:

- **OWNER**: Full control over the agent

- **EDITOR**: Can view and modify the agent

- **VIEWER**: Read-only access to the agent

Without running this migration, existing agents will be inaccessible through the new permission-aware API endpoints.

### Running the Migration

Choose the appropriate command based on your deployment method:

#### 1. For the default `docker-compose.yml` (if you use `docker compose up` to start the app):

**Preview changes (dry run):**

```bash

docker compose exec api npm run migrate:agent-permissions:dry-run

```

**Execute migration:**

```bash

docker compose exec api npm run migrate:agent-permissions

```

**Custom batch size (for large datasets):**

```bash

docker compose exec api npm run migrate:agent-permissions:batch

```

#### 2. For the `deploy-compose.yml` (if you followed the [Ubuntu Docker Guide](/docs/remote/docker_linux)):

**Preview changes (dry run):**

```bash

docker exec -it LibreChat-API /bin/sh -c "cd .. && npm run migrate:agent-permissions:dry-run"

```

**Execute migration:**

```bash

docker exec -it LibreChat-API /bin/sh -c "cd .. && npm run migrate:agent-permissions"

```

**Custom batch size (for large datasets):**

```bash

docker exec -it LibreChat-API /bin/sh -c "cd .. && npm run migrate:agent-permissions:batch"

```

#### 3. For local development (from project root):

**Preview changes (dry run):**

```bash

npm run migrate:agent-permissions:dry-run

```

**Execute migration:**

```bash

npm run migrate:agent-permissions

```

**Custom batch size (for large datasets):**

```bash

npm run migrate:agent-permissions:batch

```

### What Happens During Migration

- **Private Agents**: Remain accessible only to their creators (receive OWNER permission)

- **Shared Agents**: If an agent was previously shared, it will receive appropriate ACL entries as a Public Agent (shared to all users)

- **System Detection**: LibreChat automatically detects unmigrated agents at startup and displays a warning

You can adjust the resulting agent permissions via the Agent Builder UI.

The same migration process applies to prompts. If you also have existing prompts, run the prompt permissions migration using the same commands but replace `agent` with `prompt` in the command names.

## Agents API (Beta)

LibreChat agents can also be accessed programmatically via API, enabling external applications and scripts to interact with your agents using OpenAI-compatible SDKs or the Open Responses format.

See the [Agents API documentation](/docs/features/agents_api) for setup and usage details.

## What's next?

LibreChat Agents usher in a new era for the app where future pipelines can be streamlined via Agents for specific tasks and workflows across your experience in LibreChat.

Future updates will include:

- General improvements to the current Agent experience

- Multi-agent orchestration for complex workflows

- Ability to customize agents for various functions: titling (chat thread naming), memory management (user context/history), and prompt enhancement (input assistance/predictions)

- More tools, configurable tool parameters, dynamic tool creation.

Furthermore, the update introduces a new paradigm for LibreChat, as its underlying architecture provides a much needed refresh for the app, optimizing both the user experience and overall app performance.

To highlight one notable optimization, an AI generation of roughly 1000 tokens will transfer about 1 MB of data using traditional endpoints (at the time of writing, any endpoint option besides Agents and AWS Bedrock).

Using an agent, the same generation will transfer about about 52 kb of data, a 95% reduction in data transfer, which is that much less of a load on the server and the user's device.

---

AI Agents in LibreChat provide a powerful way to create specialized assistants without coding knowledge while maintaining the flexibility to work with your preferred AI models and providers.

---

#LibreChat #AIAssistants #NoCode #OpenSource

# Agents API (Beta) (/docs/features/agents_api)

The Agents API is currently in beta. Endpoints, request/response formats, and behavior may change as we iterate toward a stable release.

LibreChat exposes your agents through two API-compatible interfaces, allowing external applications, scripts, and services to interact with your agents programmatically.

## Overview

The Agents API provides two interfaces:

- **OpenAI-compatible Chat Completions** — `POST /api/agents/v1/chat/completions`

- **Open Responses API** — `POST /api/agents/v1/responses`

Both are authenticated via API keys and support streaming responses, making it easy to integrate LibreChat agents into existing workflows that already use OpenAI SDKs or similar tooling.

LibreChat is adopting [Open Responses](https://www.openresponses.org/) as its primary API framework for serving agents. While the Chat Completions endpoint provides backward compatibility with existing OpenAI-compatible tooling, the Open Responses endpoint represents the future direction.

## Enabling the Agents API

The Agents API is gated behind the `remoteAgents` interface configuration. All permissions default to `false`.

```yaml filename="librechat.yaml"

interface:

remoteAgents:

use: true

create: true

```

See [Interface Configuration — remoteAgents](/docs/configuration/librechat_yaml/object_structure/interface#remoteagents) for all available options.

**Note:** Admin users have all remote agent permissions enabled by default.

## API Key Management

Once `remoteAgents.use` and `remoteAgents.create` are enabled, users can generate API keys from the LibreChat UI. These keys authenticate requests to the Agents API.

## Endpoints

### Chat Completions (OpenAI-compatible)

```

POST /api/agents/v1/chat/completions

```

Use any OpenAI-compatible SDK by pointing it at your LibreChat instance. The `model` parameter corresponds to an agent ID.

**Example with curl:**

```bash

curl -X POST https://your-librechat-instance/api/agents/v1/chat/completions \

-H "Authorization: Bearer YOUR_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "agent_abc123",

"messages": [

{"role": "user", "content": "Hello, what can you help me with?"}

],

"stream": true

}'

```

**Example with OpenAI SDK (Python):**

```python

from openai import OpenAI

client = OpenAI(

base_url="https://your-librechat-instance/api/agents/v1",

api_key="YOUR_API_KEY"

)

response = client.chat.completions.create(

model="agent_abc123",

messages=[{"role": "user", "content": "Hello!"}],

stream=True

)

for chunk in response:

print(chunk.choices[0].delta.content, end="")

```

### List Models

```

GET /api/agents/v1/models

```

Returns available agents as models. Useful for discovering which agents are accessible with your API key.

### Open Responses API

```

POST /api/agents/v1/responses

```

The Open Responses endpoint follows the [Open Responses specification](https://www.openresponses.org/), an open inference standard initiated by OpenAI and built by the open-source AI community. It is designed for agentic workflows with native support for reasoning, tool use, structured outputs, and streaming semantic events.

```bash

curl -X POST https://your-librechat-instance/api/agents/v1/responses \

-H "Authorization: Bearer YOUR_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "agent_abc123",

"input": "What is the weather today?"

}'

```

## Token Usage Tracking

All Agents API requests track token usage against the user's balance (when token spending is configured). Both input and output tokens are counted, including cache tokens for providers that support them (OpenAI, Anthropic).

## Roadmap

- **Open Responses as primary interface** — We plan to expand the Open Responses endpoint with full support for agentic loops, tool orchestration, and streaming semantic events.

- **Anthropic Messages API** — We may add support for the Anthropic Messages API format as an additional interface in the future.

## Related Documentation

- [Agents](/docs/features/agents) — Creating and configuring agents

- [Interface Configuration — remoteAgents](/docs/configuration/librechat_yaml/object_structure/interface#remoteagents) — Access control settings

- [Token Usage](/docs/configuration/token_usage) — Configuring token spending and balance

- [Open Responses Specification](https://www.openresponses.org/) — The open inference standard

# Artifacts - Generative UI (/docs/features/artifacts)

- **Generative UI:** Create React components, HTML code, and Mermaid diagrams

- **Flexible Integration:** Use any model you have set up

- **Iterative Design:** Rapidly improve and refine generated outputs

- **Agent Integration:** Configure artifacts at the agent level (recommended approach)

> **Note:** The preferred way to use artifacts is now through the [Agents feature](/docs/features/agents#artifacts), which allows for more granular control by enabling/disabling artifacts at the agent level rather than app-wide.

## Key Features of LibreChat's Code Artifacts:

- Instant prototyping of websites and UIs with generative AI

- Effortless creation of dynamic, responsive layouts

- Interactive learning environment for React and HTML

- Complex idea visualization using Mermaid diagrams

- AI-powered intuitive, user-centric design iterations

- Free and open-source alternative to paid AI tools

Experience the future of UI/UX design and development with LibreChat's generative capabilities. Bring your ideas to life faster than ever before!

## Content-Security-Policy

You may need to need to update your web server's Content-Security-Policy to include `frame-src 'self' https://*.codesandbox.io` in order to load generated HTML apps in the Artifacts panel. This is a dependency of the [sandpack](https://sandpack.codesandbox.io/) library.

## Self-Hosting the Sandpack Bundler

Artifacts in LibreChat use CodeSandbox's Sandpack library to securely render HTML/JS code. By default, LibreChat connects to CodeSandbox's public CDN, which may also transmit telemetry for its usage.

For enhanced privacy, security compliance, or isolated network environments, you can [self-host the bundler.](https://sandpack.codesandbox.io/docs/guides/hosting-the-bundler)

### Why Self-Host the Sandpack Bundler?

[Self-hosting the bundler](https://sandpack.codesandbox.io/docs/guides/hosting-the-bundler) provides several advantages:

- **Privacy & Security**: Keep code execution within your own infrastructure

- **Reliability**: Remove dependency on external services

- **Performance**: Reduce latency by hosting the bundler in your network

- **Compliance**: Meet organizational data handling requirements

- **Control**: Configure and customize to your specific needs

### Configuration

Once you have a self-hosted bundler set up, simply configure LibreChat to use it by setting the environment variable:

```bash

# `.env` file

SANDPACK_BUNDLER_URL=http://your-bundler-url

```

### General Considerations

Self-hosting the bundler introduces some additional overhead:

- **CORS Configuration**: You'll need to manage cross-origin resource sharing policies

- **Security Management**: You become responsible for the security of the bundler service

- **Maintenance**: Updates and patches will need to be applied manually

- **Resource Requirements**: Additional server resources will be needed to host the bundler

For detailed instructions on setting up and maintaining your self-hosted bundler, refer to the [forked CodeSandbox repository](https://github.com/LibreChat-AI/codesandbox-client) tailored for LibreChat deployment, including the removal of telemetry from Sandpack.

---

AI News 2024: The Original and BEST Open-Source AI Chat Platform, LibreChat Releases Code Artifacts! This Generative UI Tool Can Generate React, HTML & Diagrams Instantly from a Single Prompt in Your Browser with Any LLM, OpenAI, Anthropic, LLAMA, Mistral, DeepSeek Coder & More Thanks to OLLAMA Integration.

#AINews2024 #OpenSourceAI #GenerativeUI #CodeArtifacts #WebDevelopment #ReactJS #LLM #AIPrototyping #DevTools #OLLAMA #LibreChat

# Authentication (/docs/features/authentication)

LibreChat has a user authentication system that allows users to sign up and log in securely and easily. The system is scalable and can handle a large number of concurrent users without compromising performance or security.

By default, we have email signup and login enabled, which means users can create an account using their email address and a password. They can also reset their password if they forget it.

Additionally, our system can integrate social logins from various platforms such as Google, GitHub, Discord, OpenID, and more. This means users can log in using their existing accounts on these platforms, without having to create a new account or remember another password.

**For further details, refer to the configuration guides provided here: [Authentication](/docs/configuration/authentication)**

- When you run the app for the first time, you need to create a new account by clicking on "Sign up" on the login page. The first account you make will be the admin account. At the moment, the only privilege of the admin user is to control [agents](docs/features/agents#administrator-controls), prompts, and [memory](/docs/features/memory) permissions, and it will be useful for the admin dashboard to manage users and other settings later.

- The first account created should ideally be a local account (email and password).

# Code Interpreter API (/docs/features/code_interpreter)

## Introduction

LibreChat's Code Interpreter API provides a secure and hassle-free way to execute code and manage files through a simple API interface. Whether you're using it through LibreChat's Agents or integrating it directly into your applications, the API offers a powerful sandbox environment for running code in multiple programming languages.

## Subscription

**Access to this feature requires an [API subscription, get started here](https://code.librechat.ai/pricing).**

## Getting Started

1. Visit [code.librechat.ai](https://code.librechat.ai/pricing) to get your API key

2. Integrate the API into your application or use it through LibreChat

3. Start executing code and generating files securely

## Key Features

### Supported Languages

Execute code in multiple programming languages:

- Python, Node.js (JS/TS), Go, C/C++, Java, PHP, Rust, Fortran, Rscript

### Seamless File Handling

- Upload files for processing

- Download generated outputs

- Secure file management

- Session-based file organization

### Security & Convenience

- Secure sandboxed execution environment

- No local setup required

- No server deployment needed

- No configuration management

## Using the API

### In LibreChat

The API has first-class support in LibreChat through two main methods:

1. **[AI Agents](/docs/features/agents#code-interpreter)**: Enable Code Interpreter in your agent's configuration to allow it to execute code and process files automatically.

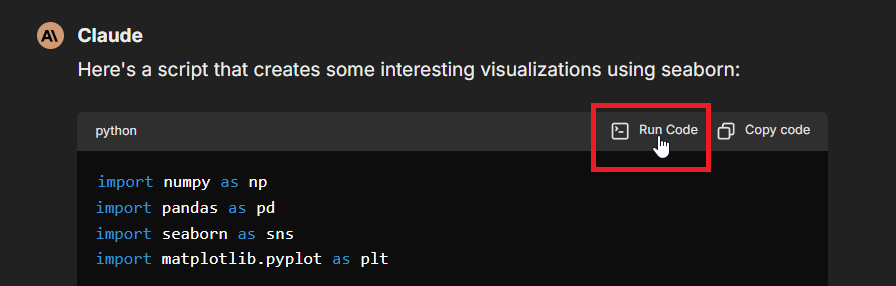

2. **Manual Execution**: Use the "Run Code" button in code blocks within the chat interface, as shown here:

### Set up API key

- **Per-user setup:** input your API key in LibreChat when prompted (using the above methods)

- **Global setup:** use `LIBRECHAT_CODE_API_KEY` environment variable in the .env file of your project (provides access to all users)

### Direct API Integration

The Code Interpreter API can be integrated into any application using a simple API key authentication:

1. Get your API key from [code.librechat.ai](https://code.librechat.ai/pricing)

2. Include the API key in your requests using the `x-api-key` header

### Enterprise

The enterprise plan requires use of the `LIBRECHAT_CODE_BASEURL` environment variable to correspond with the self-hosted instance of the API, along with any generated API keys from the dashboard, used the same way as mentioned above.

## Core Functionality

### Code Execution

- Run code snippets in supported languages

- Receive stdout/stderr output

- Get execution statistics (memory usage, CPU time)

- Handle program arguments

- Access execution status and results

### File Operations

- Upload input files

- Download generated outputs

- List available files

- Delete unnecessary files

- Manage file sessions

### Limitations

- Code cannot access the network

- Only 10 files can be generated per run

- Execution limits vary by plan:

- **Hobby**:

- 256 MB RAM per execution

- 25 MB per file upload

- 750 requests per month

- **Enthusiast**:

- 512 MB RAM per execution

- 50 MB per file upload

- 3,000 requests per month

- **Pro**:

- 512 MB RAM per execution

- 150 MB per file upload

- 7,000 requests per month

- The [Enterprise Plan](https://code.librechat.ai/pricing) provides custom limits and features

## Use Cases

- **Code Testing**: Test code snippets in multiple languages

- **File Processing**: Transform and analyze files programmatically

- **AI Applications**: Execute AI-generated code securely

- **Development Tools**: Build interactive coding environments

- **Objective Logic**: Verify code logic and correctness, improving AI models

## Why a Paid API?

While LibreChat remains free and open source under the MIT license, the Code Interpreter API is offered as a paid service for several key reasons:

1. **Project Sustainability**: Subscribing to an API plan provides direct support to the project's development, even more effectively than [GitHub Sponsors](https://github.com/sponsors/danny-avila). Your subscription helps ensure LibreChat's continued growth and improvement.

2. **Technical Considerations**: Including code execution capabilities in the core project would add significant complexity and hardware requirements that not all users need. The API service eliminates these concerns while maintaining a lightweight core application.

3. **Managed Service Benefits**:

- No complex configuration

- Immediate availability

- Regular updates and maintenance

- Professional support

- Secure, sandboxed environment

4. **Intellectual Property Protection**: The Code Interpreter's architecture represents significant innovation in secure, scalable sandbox technology. While similar solutions exist, they often lack the comprehensive security measures and scalability features that make this implementation unique. Keeping this component as a closed-source API helps protect these innovations and ensures the service maintains its high security and performance standards.

Even if you only use code execution occasionally, subscribing helps support LibreChat's development while enhancing your experience with professional-grade features. It's a win-win that keeps the core project free while offering optional advanced capabilities for those who need them.

---

## Conclusion

The Code Interpreter API provides a secure, convenient way to execute code and manage files without the hassle of setting up and maintaining execution environments. Whether you're using it through LibreChat's Agents or integrating it directly into your applications, it offers a robust solution for code execution needs.

For detailed technical specifications and API reference, please visit our [API Documentation](https://code.librechat.ai/docs).

#LibreChat #CodeExecution #API #Development

# Forking Chats (/docs/features/fork)

import Image from 'next/image'

## Why Fork Conversations?

Think of forking like creating a new path in your chat - it's super handy when you want to:

### Keep Things On Track

Sometimes you'll hit on an interesting side topic but don't want to derail your main conversation. Forking lets you dive into that rabbit hole while keeping your original chat clean

### Play "What If"

Want to see how different approaches might play out? Fork the chat and try various angles. It's like having multiple parallel conversations, each exploring a different possibility

### Save Important Context

When you fork, you can bring along as much or as little of the previous chat as you need. This way, you're not starting completely from scratch, and the AI still has the background it needs

### Share Specific Parts

Need to show someone just part of your conversation? Instead of copying and pasting or sharing your whole chat history, you can fork just the relevant bit and share that

### Keep Ideas Organized

Long chats can spawn lots of different ideas. Rather than letting them get tangled together, you can fork each one into its own thread and develop them separately

### Try Different Approaches

Sometimes you might wonder if there's a better way to ask something. Forking lets you experiment with different ways of talking to the AI without messing up your main conversation

## Forking Options

Use these settings to fork messages with the desired behavior.

Forking refers to creating a new conversation that starts/ends from specific messages in the current conversation, creating a copy according to the options selected.

The "target message" refers to either the message the popup was opened from, or, if you check "Start fork here", the latest message in the conversation.

### Visible messages only:

This option forks only the visible messages; in other words, the direct path to the target message, without any branches.

### Include related branches:

This option forks the visible messages, along with related branches; in other words, the direct path to the target message, including branches along the path.

### Include all to/from here (default):

This option forks all messages leading up to the target message, including its neighbors; in other words, all message branches, whether or not they are visible or along the same path, are included.

## Additional Options

- **Start fork here:** If checked, forking will commence from this message to the latest message in the conversation, according to the behavior selected above.

- **Remember:** Check to remember the options you select for future usage, making it quicker to fork conversations as preferred.

- Alternatively you can control the default behavior in the settings menu:

# Image Generation & Editing (/docs/features/image_gen)

## Quick Start

Get image generation working in under 5 minutes with OpenAI Image Tools (recommended).

### Create an Agent

Go to the **Agents** panel in LibreChat and create a new agent. Give it a name like "Image Creator".

### Add OpenAI Image Tools

In the agent's **Tools** list, select **OpenAI Image Tools**. This adds both image generation and image editing capabilities.

### Set Your API Key

Add the following to your `.env` file:

```bash filename=".env"

IMAGE_GEN_OAI_API_KEY=sk-your-openai-api-key

```

### Restart and Test

| Deployment | Command |

|------------|---------|

| Docker | `docker compose down && docker compose up -d` |

| Local | Stop (Ctrl+C) then `npm run backend` |

Send a message like "Generate an image of a sunset over mountains" to your agent.

---

LibreChat comes with **built-in image tools** that you can add to an **[Agent](/docs/features/agents).**

Each has its own look, price-point, and setup step (usually just an API key or URL).

| Tool | Best for | Needs |

|------|----------|-------|

| **OpenAI Image Tools** | Cutting-edge results (GPT-Image-1).

Can also ***edit*** the images you upload. | OpenAI API |

| **Gemini Image Tools** | Google's latest image models with context-aware generation. | Gemini API or Vertex AI |

| **DALL·E (3 / 2)** | Legacy OpenAI Image models. | OpenAI API |

| **Stable Diffusion** | Local or self-hosted generation, endless community models. | Automatic1111 API |

| **Flux** | Fast cloud renders, optional fine-tunes. | Flux API |

| **MCP** | Bring-your-own-Image-Generators | MCP server with image output support |

**Notes:**

- API keys can be omitted in favor of allowing the user to enter their own key from the UI.

- Image Outputs are directly sent to the LLM as part of the immediate chat context following generation.

- The LLM will only get vision context from images attached to user messages, and not from generations/edits, except for immediately after generation.

- See [Image Storage and Handling](#image-storage-and-handling) for more details.

- MCP Server tool image outputs are supported, which may output images similarly to LC's built-in tools.

- Note: MCP servers may or may not use the correct format when outputting images. See details in the [MCP section below](#5--model-context-protocol-mcp).

---

## 1 · OpenAI Image Tools (recommended)

### Features

"OpenAI Image Tools" are an agent toolkit made up of 2 separate tools.

- **Image Generation**:

- **Create** brand-new images from text prompts (no upload required).

- **Image Editing**:

- **Edit** or **remix** the images you just uploaded—change colours, add objects, extend the canvas, etc.

- Both use OpenAI's latest image generation model, **GPT-Image-1**, for superior instruction following, text rendering, detailed editing, real-world knowledge

- See OpenAI's [Image Generation documentation](https://platform.openai.com/docs/guides/image-generation?image-generation-model=gpt-image-1) for more details.

#### Generation vs. Editing

| Use-case | Invokes |

|----------|---------------|

| "Start from scratch" | **Image Generation** |

| "Use existing image(s)" | **Image Editing** |

The agent decides which tool to use based on the context:

- **Image Generation** creates brand new images from text descriptions only

- **Image Editing** modifies or remixes existing images using their image IDs

- These can be images from the current message or previously generated/referenced images

- The LLM keeps track of image IDs as long as they remain in the context window

- Includes the referenced image IDs in the tool output

- Both tools are always available, but the LLM will choose the appropriate one based on the user's request

- Both tools will include the generated image ID in the tool output

⚠️ **Important**

- Image editing relies on image IDs, which are retained in the chat history.

- When files are uploaded to the current request, their image IDs are added to the context of the LLM before any tokens are generated.

- Previously referenced or generated image IDs can be used for editing, as long as they remain within the context window.

- The LLM can include any relevant image IDs in the `image_ids` array when calling the image editing tool.

- You can also attach previously uploaded images from the side panel without needing to upload them again.

- This also has the added benefit of providing a vision model with the image context, which can be useful for informing the `prompt` for the image editing tool.

### Parameters

#### Image Generation

• **prompt** – text description (required)

• **size** – `auto` (default), `1024x1024` (square), `1536x1024` (landscape), or `1024x1536` (portrait)

• **quality** – `auto` (default), `high`, `medium`, or `low`

• **background** – `auto` (default), `transparent`, or `opaque` (transparent requires PNG or WebP format)

#### Image Editing

• **image_ids** – array of image IDs to use as reference for editing (required)

• **prompt** – text description of the changes (required)

• **size** – `auto` (default), `1024x1024`, `1536x1024`, `1024x1536`, `256x256`, or `512x512`

• **quality** – `auto` (default), `high`, `medium`, or `low`

### Setup

Create or reuse an OpenAI key and add to `.env`:

```bash

IMAGE_GEN_OAI_API_KEY=sk-...

# optional extras

IMAGE_GEN_OAI_BASEURL=https://...

```

For Azure OpenAI deployments, you will first need access: https://aka.ms/oai/gptimage1access

Then, add your corresponding credentials to your `.env` file:

```bash

IMAGE_GEN_OAI_API_KEY=your-api-key

# optional extras

IMAGE_GEN_OAI_BASEURL=https://deploymentname.openai.azure.com/openai/deployments/gpt-image-1/

IMAGE_GEN_OAI_AZURE_API_VERSION=2025-04-01-preview

```

Then add "OpenAI Image Tools" to your Agent's *Tools* list.

### Advanced Configuration

You can customize the tool descriptions and prompt guidance by setting these environment variables:

```bash

# Image Generation Tool Descriptions

IMAGE_GEN_OAI_DESCRIPTION=...

IMAGE_GEN_OAI_PROMPT_DESCRIPTION=...

# Image Editing Tool Descriptions

IMAGE_EDIT_OAI_DESCRIPTION=...

IMAGE_EDIT_OAI_PROMPT_DESCRIPTION=...

```

### Pricing

See the [GPT-Image-1 pricing page](https://platform.openai.com/docs/models/gpt-image-1) and [Image Generation Documentation](https://platform.openai.com/docs/guides/image-generation?image-generation-model=gpt-image-1#cost-and-latency) for details on costs associated with image generation.

---

## 2 · Gemini Image Tools

Gemini Image Tools integrate Google's latest image generation models, supporting both text-to-image generation and image context-aware editing.

### Features

- **Text-to-Image Generation**: Create high-quality images from detailed text descriptions

- **Image Context Support**: Use existing images as context or inspiration for new generations

- **Image Editing**: Generate new images based on modifications to existing ones (include original image ID)

- **Multiple Models**: Choose between `gemini-2.5-flash-image` (default) or `gemini-3-pro-image-preview`

- **Dual API Support**: Works with both simple Gemini API keys and Google Cloud Vertex AI

### Parameters

• **prompt** – Detailed text description of the desired image (required, up to 32,000 characters)

• **image_ids** – Optional array of image IDs to use as visual context for generation

### Setup

#### Option 1: Gemini API (Recommended)

Get an API key from [Google AI Studio](https://aistudio.google.com/app/apikey):

```bash

GEMINI_API_KEY=your_api_key_here

```

#### Option 2: Vertex AI (Enterprise)

For Google Cloud users with Vertex AI access:

```bash

GOOGLE_SERVICE_KEY_FILE=/path/to/service-account.json

GOOGLE_CLOUD_LOCATION=us-central1 # optional, default: global

```

### Model Selection

```bash

# Default model (fast and efficient)

GEMINI_IMAGE_MODEL=gemini-2.5-flash-image

# Higher quality model

GEMINI_IMAGE_MODEL=gemini-3-pro-image-preview

```

### Advanced Configuration

Customize tool descriptions via environment variables:

```bash

GEMINI_IMAGE_GEN_DESCRIPTION=...

GEMINI_IMAGE_GEN_PROMPT_DESCRIPTION=...

GEMINI_IMAGE_IDS_DESCRIPTION=...

```

More details can be found in the dedicated [Gemini Image Gen guide](/docs/configuration/tools/gemini_image_gen).

---

## 3 · DALL·E (legacy)

DALL·E provides high-quality image generation using OpenAI's legacy image models.

### Parameters

• **prompt** – Text description of the desired image (required, up to 4000 characters)

• **style** – `vivid` (hyper-real, dramatic - default) or `natural` (less hyper-real)

• **quality** – `standard` (default) or `hd`

• **size** – `1024x1024` (default/square), `1792x1024` (wide), or `1024x1792` (tall)

### Setup

```bash

# Required

DALLE_API_KEY=sk-... # or DALLE3_API_KEY=sk-...

# Optional

DALLE_REVERSE_PROXY=https://... # Alternative endpoint

DALLE3_BASEURL=https://... # For Azure or custom endpoints

DALLE3_AZURE_API_VERSION=2023-12-01-preview # For Azure deployments

DALLE3_SYSTEM_PROMPT=... # Custom system prompt for DALL·E

```

### Advanced Configuration

For Azure OpenAI deployments, configure both the base URL and API version:

```bash

DALLE3_BASEURL=https://your-resource-name.openai.azure.com/openai/deployments/your-deployment-name

DALLE3_AZURE_API_VERSION=2023-12-01-preview

DALLE3_API_KEY=your-azure-api-key

```

Enable the **DALL·E** tool for the Agent and start prompting.

### Pricing

See the [DALL-E pricing page](https://platform.openai.com/docs/models/dall-e-3) and [Image Generation Documentation](https://platform.openai.com/docs/guides/image-generation?image-generation-model=dall-e-3) for details on costs associated with image generation.

---

## 4 · Stable Diffusion (local)

Run images entirely on your own machine or server.

Point LibreChat at any Automatic1111 (or compatible) endpoint and you're set.

### Parameters

• **prompt** – Detailed keywords describing desired elements in the image (required)

• **negative_prompt** – Keywords describing elements to exclude from the image (required)

The Stable Diffusion implementation uses these default parameters:

- cfg_scale: 4.5

- steps: 22

- width: 1024

- height: 1024

These values are currently fixed but provide good results for most use cases.

### Setup

```bash

SD_WEBUI_URL=http://127.0.0.1:7860 # URL to your Automatic1111 WebUI

```

No API key required—just the reachable URL.

More details on setting up Automatic1111 can be found in the dedicated [Stable Diffusion guide](/docs/configuration/tools/stable_diffusion).

---

## 5 · Flux

Cloud generator with an emphasis on speed and optional fine-tuned models.

### Features

- Fast cloud-based image generation

- Support for fine-tuned models

- Multiple quality levels and aspect ratios

- Raw mode for less processed, more natural-looking images

### Parameters

The Flux tool supports three main actions:

1. **generate** - Create a new image from a text prompt

2. **generate_finetuned** - Create an image using a fine-tuned model

3. **list_finetunes** - List available custom models for the user

More details can be found in the dedicated [Flux guide](/docs/configuration/tools/flux#parameters).

### Setup

```bash

FLUX_API_KEY=flux_live_...

FLUX_API_BASE_URL=https://api.us1.bfl.ai # default is fine for most users

```

Choose the **Flux** tool inside the Agent. Prompts are plain text; one call produces one image.

### Pricing

See the [Flux pricing page](https://docs.bfl.ml/pricing/) for details on costs associated with image generation.

---

## 6 · Model Context Protocol (MCP)

Image outputs are supported from MCP servers.

For example, the [Puppeteer MCP Server](https://github.com/modelcontextprotocol/servers/tree/main/src/puppeteer) can be used to generate screenshots of web pages, which correctly output the image in the expected format and is treated the same as LC's built-in image tools.

> The examples below assume LibreChat is running outside of Docker, directly using Node.js. The Model Context Protocol is a relatively new framework, and many developers are still learning how to properly serve their systems with uv/node for scalable distribution.

> As this technology is still emerging, there are currently few image-generating servers available, and many existing ones have yet to adopt the correct response format for images.

> While many MCP servers do function well within Docker, the following examples do not, or not without more advanced configurations, showcasing some of the current inconsistency between MCP servers.

```yaml

mcpServers:

puppeteer:

command: npx

args:

- -y

- "@modelcontextprotocol/server-puppeteer"

```

The following is an example of an [Image Generation server](https://github.com/GongRzhe/Image-Generation-MCP-Server) that outputs images using [Replicate API](https://replicate.com/account/api-tokens), but returns URLs of the images, which doesn't conform to MCP's image response standard.

> Note: for this particular server, you need to install the `@gongrzhe/image-gen-server` package globally using npm, i.e. `npm install -g @gongrzhe/image-gen-server`, then point to the package's compiled files as shown below.

```yaml

mcpServers:

image-gen:

command: "node"

# First, install the package globally using npm:

# `npm install -g @gongrzhe/image-gen-server`

# Then, point to the location of the installed package,

# which you can find by running `npm root -g`

args:

- "{REPLACE_WITH_NODE_MODULES_LOCATION}/@gongrzhe/image-gen-server/build/index.js"

# Example with output from `npm root -g`:

# - "/home/danny/.nvm/versions/node/v20.19.0/lib/node_modules/@gongrzhe/image-gen-server/build/index.js"

env:

# Do not hardcode the API token here, use the environment variable instead

# The following will pick up the token from your .env file or environment

REPLICATE_API_TOKEN: "${REPLICATE_API_TOKEN}"

MODEL: "google/imagen-3"

```

---

## Image Storage and Handling

All generated images are:

1. Saved according to the configured [**`fileStrategy`**](/docs/configuration/librechat_yaml/object_structure/config#filestrategy)

2. Displayed directly in the chat interface

3. Image tool outputs are directly sent to the LLM as part of the immediate chat context following generation.

- This may create issues if you are using an LLM that does not support image inputs.

- There will be an option to disable this behavior on a per-agent-basis in the future.

- These outputs are only directly sent to the LLM upon generation, not on every message.

- To include the image in the chat, you can directly attach it to the message from the side panel.

- To summarize, the LLM will only get vision context from images attached to user messages, and not from generations/edits, except for immediately after generation.

---

## Proxy Support

All image generation tools support proxy configuration through the `PROXY` environment variable:

```bash

PROXY=http://proxy-url:port

```

## Error Handling

If any of the tools encounter an error, they will return an error message explaining what went wrong. Common issues include:

- Invalid API key

- API unavailability

- Content policy violations

- Proxy/network issues

- Invalid parameters

- Unsupported image payload (see [Image Storage and Handling](#image-storage-and-handling) above)

---

## Prompting

Though you can customize the prompts for [OpenAI Image Tools](#advanced-configuration) and [DALL·E](#advanced-configuration-1), the following tips inform the default prompts supplied by the tools, which is helpful to know for your own writing/prompting.

1. Start with the **subject** and **style** (photo, oil painting, etc.).

2. Add **composition** and **camera / medium** ("wide-angle shot of…", "watercolour…").

3. Mention **lighting & mood** ("golden hour", "dramatic shadows").

4. Finish with **detail keywords** (textures, colours, expressions).

5. Keep negatives positive—describe what should be included, not what to avoid.

Example: