Langfuse Tracing

This document explains how to integrate Langfuse tracing with LibreChat to get full observability into your AI conversations.

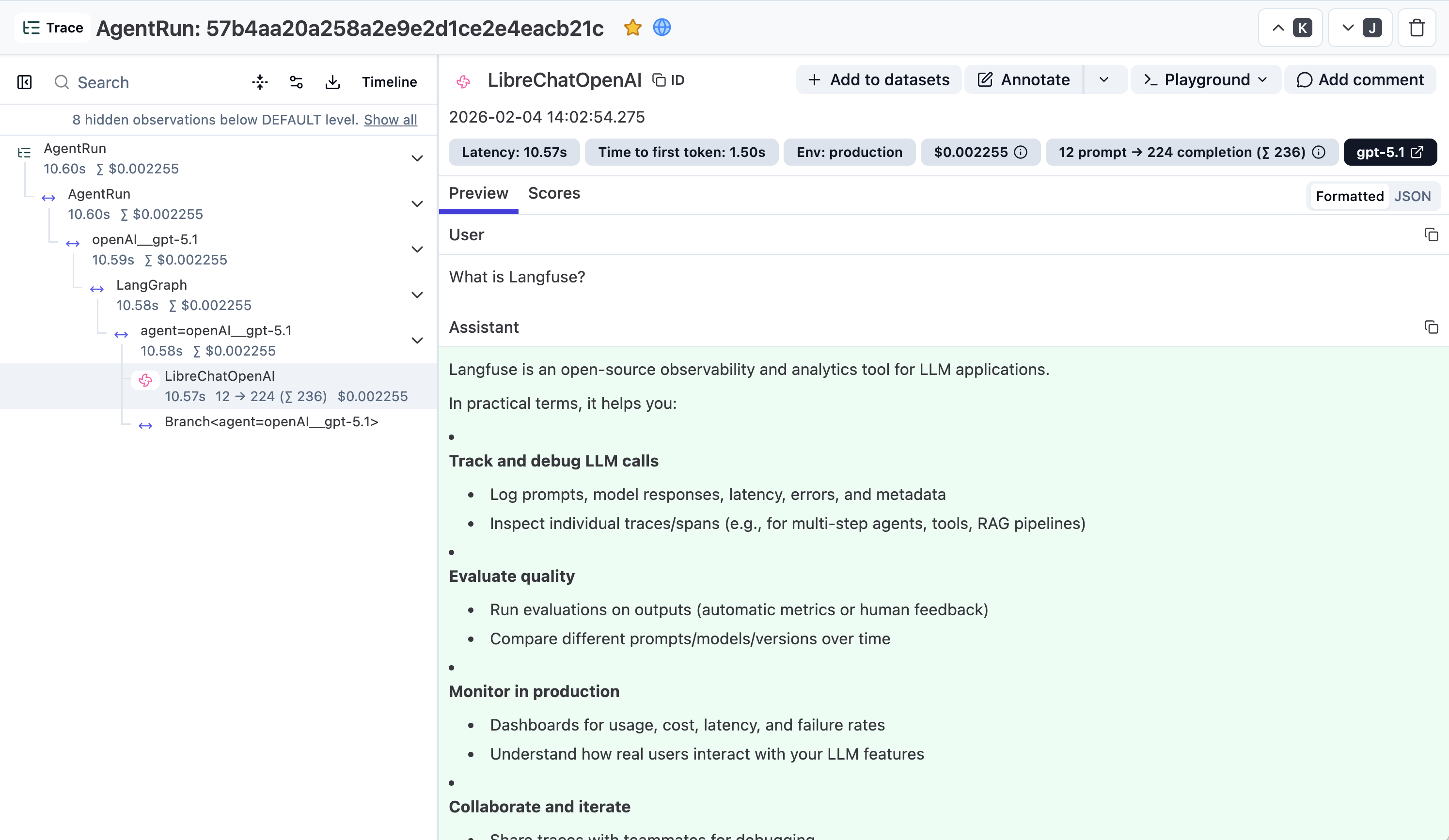

Langfuse is an open-source LLM observability platform that helps you trace, monitor, and debug your LLM applications. Once it is integrated with LibreChat, each chat message response is recorded as a trace that you can inspect in the Langfuse UI.

Prerequisites

Before you begin, ensure you have:

- A running LibreChat instance (see Quick Start)

- A Langfuse account (sign up for free)

- Langfuse API keys from your project settings

Setup

Add the following Langfuse-related environment variables to your .env file in your LibreChat installation directory:

| Key | Type | Description | Example |
|---|---|---|---|
| LANGFUSE_PUBLIC_KEY | string | Your Langfuse public key. | LANGFUSE_PUBLIC_KEY=pk-lf-*** |
| LANGFUSE_SECRET_KEY | string | Your Langfuse secret key. | LANGFUSE_SECRET_KEY=sk-lf-*** |
| LANGFUSE_BASE_URL | string | The Langfuse API base URL. | LANGFUSE_BASE_URL=https://cloud.langfuse.com |

Example Configuration
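
Put together, the three variables from the table might look like this in the .env file. The key values are the placeholders from the table, not real credentials; replace them with the keys from your Langfuse project settings.

```bash
# .env in the LibreChat installation directory
# Placeholder values: replace with the keys from your Langfuse project settings.
LANGFUSE_PUBLIC_KEY=pk-lf-***
LANGFUSE_SECRET_KEY=sk-lf-***
LANGFUSE_BASE_URL=https://cloud.langfuse.com
```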

Self-Hosted Langfuse

For self-hosted Langfuse instances, set LANGFUSE_BASE_URL to your custom URL (e.g., http://localhost:3000 for local development).

Restart LibreChat

After adding the environment variables, restart your LibreChat instance to apply the changes.
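
The exact restart command depends on how LibreChat is deployed. As a minimal sketch, assuming a Docker Compose deployment (an assumption; adapt it if you run LibreChat another way), the restart could look like this:

```bash
# Assumes LibreChat runs via Docker Compose.
# Run from the directory that contains the compose file and the .env file.
docker compose down    # stop and remove the running containers
docker compose up -d   # recreate them in the background so the updated .env is read
```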

See Traces in Langfuse

Once LibreChat is restarted with Langfuse configured, you will see a new trace for every chat message response in the Langfuse UI.
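
If you prefer to check from the command line, the sketch below queries the Langfuse public API for the most recent trace. It assumes the three LANGFUSE_* variables are exported in your current shell (having them in LibreChat's .env file does not do this by itself) and that curl is installed.

```bash
# Assumes LANGFUSE_PUBLIC_KEY, LANGFUSE_SECRET_KEY, and LANGFUSE_BASE_URL
# are exported in the current shell.
# The Langfuse public API uses HTTP Basic auth:
# public key as the username, secret key as the password.
curl -s -u "${LANGFUSE_PUBLIC_KEY}:${LANGFUSE_SECRET_KEY}" \
  "${LANGFUSE_BASE_URL}/api/public/traces?limit=1"
```

A JSON response that includes a trace entry after you send a chat message suggests the integration is working. An authentication error usually points to a wrong key pair or base URL.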
